|

| You could also click here to watch the demo video. |

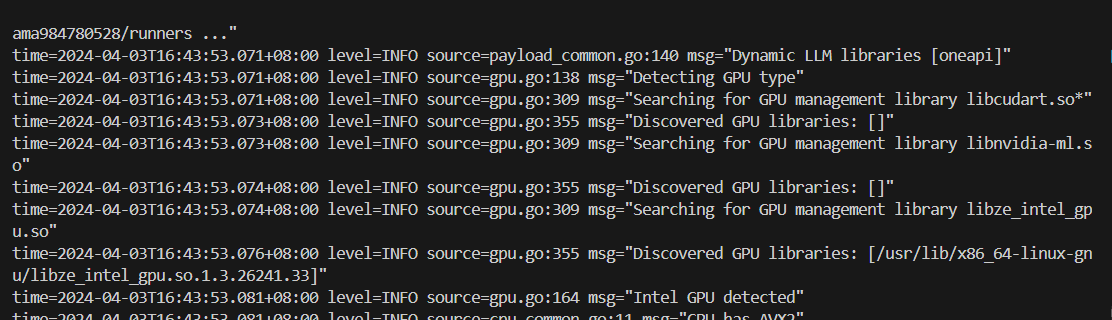

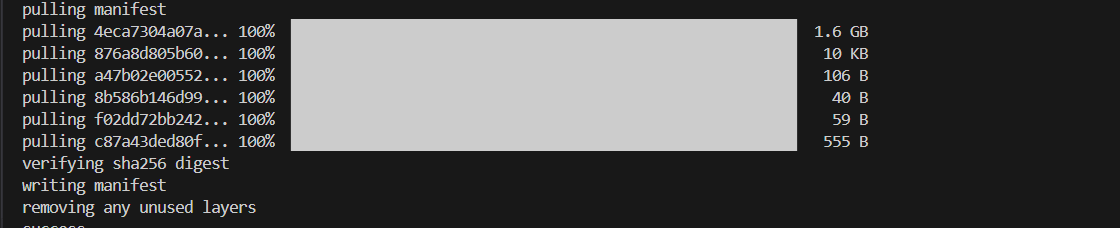

### 4. Pull Model

Keep the Ollama service on and open another terminal and run `./ollama pull

### 4. Pull Model

Keep the Ollama service on and open another terminal and run `./ollama pull  ### 5. Using Ollama

#### Using Curl

Using `curl` is the easiest way to verify the API service and model. Execute the following commands in a terminal. **Replace the

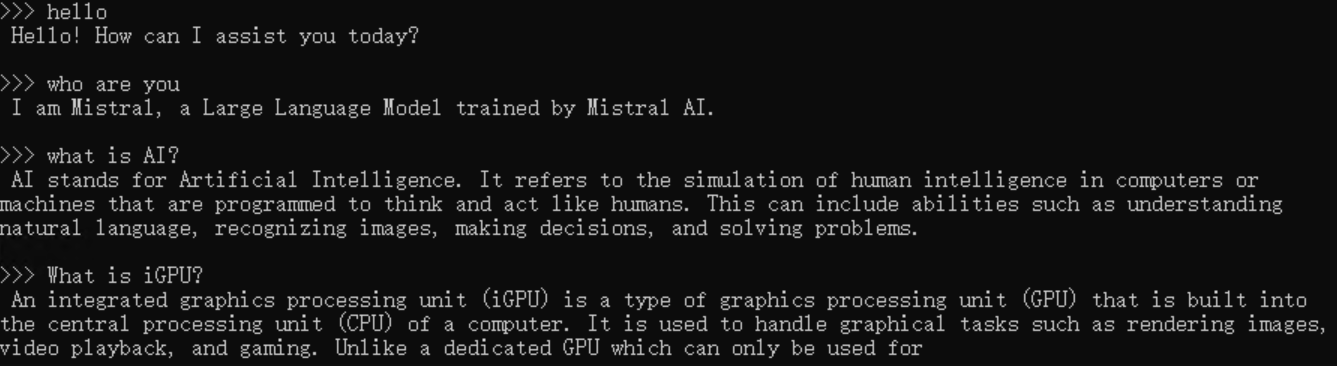

### 5. Using Ollama

#### Using Curl

Using `curl` is the easiest way to verify the API service and model. Execute the following commands in a terminal. **Replace the