diff --git a/README.md b/README.md

index ce84f188..ff02fb83 100644

--- a/README.md

+++ b/README.md

@@ -153,6 +153,7 @@ Over 20 models have been optimized/verified on `bigdl-llm`, including *LLaMA/LLa

| Whisper | [link](python/llm/example/CPU/HF-Transformers-AutoModels/Model/whisper) | [link](python/llm/example/GPU/HF-Transformers-AutoModels/Model/whisper) |

| Phi-1_5 | [link](python/llm/example/CPU/HF-Transformers-AutoModels/Model/phi-1_5) | [link](python/llm/example/GPU/HF-Transformers-AutoModels/Model/phi-1_5) |

| Flan-t5 | [link](python/llm/example/CPU/HF-Transformers-AutoModels/Model/flan-t5) | [link](python/llm/example/GPU/HF-Transformers-AutoModels/Model/flan-t5) |

+| Qwen-VL | [link](python/llm/example/CPU/HF-Transformers-AutoModels/Model/qwen-vl) | |

***For more details, please refer to the `bigdl-llm` [Document](https://test-bigdl-llm.readthedocs.io/en/main/doc/LLM/index.html), [Readme](python/llm), [Tutorial](https://github.com/intel-analytics/bigdl-llm-tutorial) and [API Doc](https://bigdl.readthedocs.io/en/latest/doc/PythonAPI/LLM/index.html).***

diff --git a/python/llm/README.md b/python/llm/README.md

index 4fcab1e4..6032a992 100644

--- a/python/llm/README.md

+++ b/python/llm/README.md

@@ -60,6 +60,7 @@ Over 20 models have been optimized/verified on `bigdl-llm`, including *LLaMA/LLa

| Whisper | [link](example/CPU/HF-Transformers-AutoModels/Model/whisper) | [link](example/GPU/HF-Transformers-AutoModels/Model/whisper) |

| Phi-1_5 | [link](example/CPU/HF-Transformers-AutoModels/Model/phi-1_5) | [link](example/GPU/HF-Transformers-AutoModels/Model/phi-1_5) |

| Flan-t5 | [link](example/CPU/HF-Transformers-AutoModels/Model/flan-t5) | [link](example/GPU/HF-Transformers-AutoModels/Model/flan-t5) |

+| Qwen-VL | [link](example/CPU/HF-Transformers-AutoModels/Model/qwen-vl) | |

### Working with `bigdl-llm`

diff --git a/python/llm/example/CPU/HF-Transformers-AutoModels/Model/README.md b/python/llm/example/CPU/HF-Transformers-AutoModels/Model/README.md

index 92cb9103..f7bd3b55 100644

--- a/python/llm/example/CPU/HF-Transformers-AutoModels/Model/README.md

+++ b/python/llm/example/CPU/HF-Transformers-AutoModels/Model/README.md

@@ -25,6 +25,8 @@ You can use BigDL-LLM to run any Huggingface Transformer models with INT4 optimi

| Replit | [link](replit) |

| Mistral | [link](mistral) |

| Flan-t5 | [link](flan-t5) |

+| Phi-1_5 | [link](phi-1_5) |

+| Qwen-VL | [link](qwen-vl) |

## Recommended Requirements

To run the examples, we recommend using Intel® Xeon® processors (server), or >= 12th Gen Intel® Core™ processor (client).

diff --git a/python/llm/example/CPU/HF-Transformers-AutoModels/Model/qwen-vl/README.md b/python/llm/example/CPU/HF-Transformers-AutoModels/Model/qwen-vl/README.md

new file mode 100644

index 00000000..8c04bbb6

--- /dev/null

+++ b/python/llm/example/CPU/HF-Transformers-AutoModels/Model/qwen-vl/README.md

@@ -0,0 +1,91 @@

+# Qwen-VL

+This directory contains examples of applying BigDL-LLM INT4 optimizations to Qwen-VL models. For illustration, we use [Qwen/Qwen-VL-Chat](https://huggingface.co/Qwen/Qwen-VL-Chat) as the reference Qwen-VL model.

+

+## Requirements

+To run these examples with BigDL-LLM, your machine should meet some recommended requirements; please refer to [here](../README.md#recommended-requirements) for more information.

+

+## Example: Multimodal chat using `chat()` API

+The example [chat.py](./chat.py) shows a basic use case: starting a multimodal chat with a Qwen-VL model through the `chat()` API, with BigDL-LLM INT4 optimizations applied.

+### 1. Install

+We suggest using conda to manage the Python environment. For more information about conda installation, please refer to [here](https://docs.conda.io/en/latest/miniconda.html#).

+

+After installing conda, create a Python environment for BigDL-LLM:

+```bash

+conda create -n llm python=3.9 # Python 3.9 is recommended

+conda activate llm

+

+pip install --pre --upgrade bigdl-llm[all] # install the latest bigdl-llm nightly build with 'all' option

+

+pip install accelerate tiktoken einops transformers_stream_generator==0.0.4 scipy torchvision pillow tensorboard matplotlib # additional packages required by Qwen-VL-Chat for generation

+

+```

+

+### 2. Run

+After setting up the Python environment, you can run the example with the following steps.

+

+#### 2.1 Client

+On client Windows machines, it is recommended to run directly with full utilization of all cores:

+```powershell

+python ./chat.py

+```

+More information about the arguments can be found in the [Arguments Info](#23-arguments-info) section. The expected output can be found in the [Sample Output](#24-sample-output) section.

+

+#### 2.2 Server

+For optimal performance on a server, it is recommended to set several environment variables (refer to [here](../README.md#best-known-configuration-on-linux) for more information) and to run the example with all the physical cores of a single socket.

+

+E.g. on Linux,

+```bash

+# set BigDL-Nano env variables

+source bigdl-nano-init

+

+# e.g. for a server with 48 cores per socket

+export OMP_NUM_THREADS=48

+numactl -C 0-47 -m 0 python ./chat.py

+```

+More information about the arguments can be found in the [Arguments Info](#23-arguments-info) section. The expected output can be found in the [Sample Output](#24-sample-output) section.

+

+#### 2.3 Arguments Info

+In the example, several arguments can be passed to satisfy your requirements:

+

+- `--repo-id-or-model-path`: str, the Hugging Face repo id of the Qwen-VL model to download, or the path to a local Hugging Face checkpoint folder. Defaults to `'Qwen/Qwen-VL-Chat'`.

+- `--n-predict`: int, the maximum number of tokens to predict. Defaults to `32`.

+

+In every session, you are prompted for an image and then for text. Press **Enter** on an empty line to skip either input, or type **'exit'** at any prompt to quit the dialogue.

+

+When a response produces an output image, it is saved as `Session_<N>.png`, where `<N>` is the session number, in the same directory as `chat.py`.

+

+#### 2.4 Sample Output

+#### [Qwen/Qwen-VL-Chat](https://huggingface.co/Qwen/Qwen-VL-Chat)

+

+```log

+-------------------- Session 1 --------------------

+ Please input a picture: https://images.unsplash.com/photo-1533738363-b7f9aef128ce?auto=format&fit=crop&q=60&w=500&ixlib=rb-4.0.3&ixid=M3wxMjA3fDB8MHxzZWFyY2h8NHx8Y2F0fGVufDB8fDB8fHwy

+ Please enter the text: 这是什么

+---------- Response ----------

+图中是一只戴着墨镜的酷炫猫咪,正坐在窗边,看着窗外。

+

+-------------------- Session 2 --------------------

+ Please input a picture:

+ Please enter the text: 这只猫猫多大了?

+---------- Response ----------

+由于只猫猫戴着太阳镜,无法判断年龄,但可以猜测它应该是一只成年猫猫,已经成年。

+

+-------------------- Session 3 --------------------

+ Please input a picture:

+ Please enter the text: 在图中检测框出猫猫的墨镜

+---------- Response ----------

+[猫猫的墨镜](398,313),(994,506)

+

+-------------------- Session 4 --------------------

+ Please input a picture: exit

+```

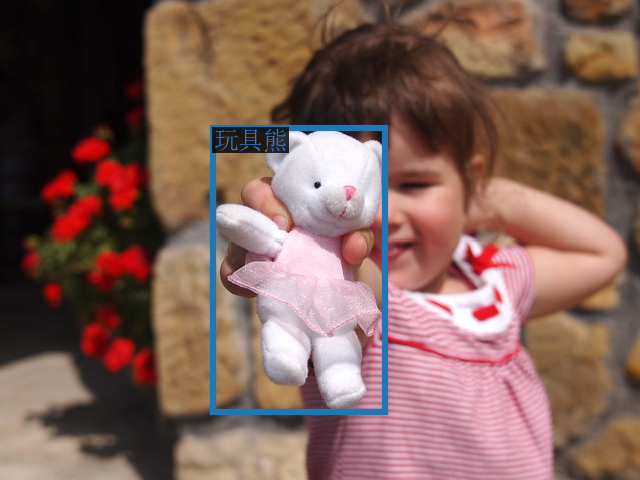

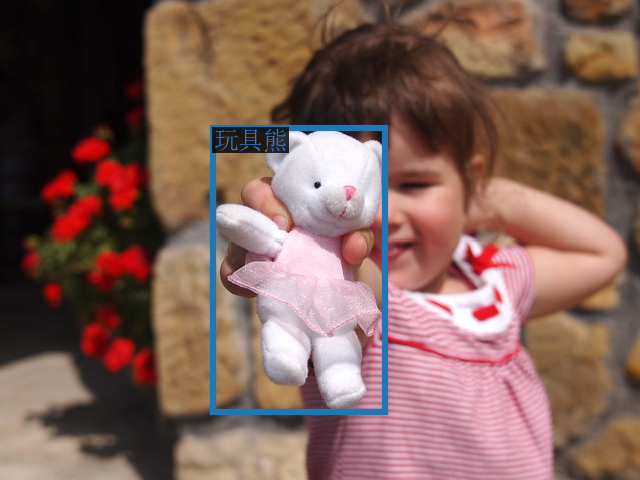

+The sample input image in Session 1 is (which is fetched from [here](https://images.unsplash.com/photo-1533738363-b7f9aef128ce?auto=format&fit=crop&q=60&w=500&ixlib=rb-4.0.3&ixid=M3wxMjA3fDB8MHxzZWFyY2h8NHx8Y2F0fGVufDB8fDB8fHwy)):

+

+ +

+The sample output image in Session 3 is:

+

+

+

+The sample output image in Session 3 is:

+

+ +

+

+
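The Session 3 response in the sample log reports the detected region as two corner points, `(398,313),(994,506)`. A minimal sketch of turning such a string into pixel coordinates is shown here. It assumes the coordinates are normalized to a 0-1000 grid, as described for Qwen-VL; the helper names `parse_box` and `to_pixels` are hypothetical and not part of this patch or of the tokenizer API.

```python
import re

# Assumption: box coordinates are normalized to a 0-1000 grid.
NORMALIZED_RANGE = 1000


def parse_box(response):
    """Return (x1, y1, x2, y2) from the first '(x1,y1),(x2,y2)' pair, or None."""
    match = re.search(r"\((\d+),(\d+)\),\((\d+),(\d+)\)", response)
    if match is None:
        return None
    return tuple(int(value) for value in match.groups())


def to_pixels(box, width, height):
    """Scale a normalized box to pixel coordinates for a width x height image."""
    x1, y1, x2, y2 = box
    return (
        round(x1 * width / NORMALIZED_RANGE),
        round(y1 * height / NORMALIZED_RANGE),
        round(x2 * width / NORMALIZED_RANGE),
        round(y2 * height / NORMALIZED_RANGE),
    )
```

In `chat.py` itself the drawing is delegated to `tokenizer.draw_bbox_on_latest_picture`, so a helper like this would only be needed if the coordinates are consumed elsewhere.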

diff --git a/python/llm/example/CPU/HF-Transformers-AutoModels/Model/qwen-vl/chat.py b/python/llm/example/CPU/HF-Transformers-AutoModels/Model/qwen-vl/chat.py

new file mode 100644

index 00000000..6c017755

--- /dev/null

+++ b/python/llm/example/CPU/HF-Transformers-AutoModels/Model/qwen-vl/chat.py

@@ -0,0 +1,85 @@

+#

+# Copyright 2016 The BigDL Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+from bigdl.llm.transformers import AutoModelForCausalLM

+from transformers import AutoTokenizer

+from transformers.generation import GenerationConfig

+import torch

+import os

+import argparse

+torch.manual_seed(1234)

+

+if __name__ == '__main__':

+    parser = argparse.ArgumentParser(description='Predict Tokens using `chat()` API for Qwen-VL model')

+    parser.add_argument('--repo-id-or-model-path', type=str, default="Qwen/Qwen-VL-Chat",

+                        help='The huggingface repo id for the Qwen-VL model to be downloaded'

+                             ', or the path to the huggingface checkpoint folder')

+    # NOTE: this example parses --n-predict but does not pass it to chat()

+    parser.add_argument('--n-predict', type=int, default=32, help='Max tokens to predict')

+

+    current_path = os.path.dirname(os.path.abspath(__file__))

+    args = parser.parse_args()

+    model_path = args.repo_id_or_model_path

+

+    # Load model

+    # For successful BigDL-LLM optimization on Qwen-VL-Chat, skip the 'c_fc' and 'out_proj' modules during optimization

+    model = AutoModelForCausalLM.from_pretrained(model_path,

+                                                 load_in_4bit=True,

+                                                 device_map="cpu",

+                                                 trust_remote_code=True,

+                                                 modules_to_not_convert=['c_fc', 'out_proj'])

+

+    # Specify hyperparameters for generation (No need to do this if you are using transformers>=4.32.0)

+    model.generation_config = GenerationConfig.from_pretrained(model_path, trust_remote_code=True)

+

+    # Load tokenizer

+    tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

+

+    # Session ID and chat history (history stays None until the first response)

+    session_id = 1

+    history = None

+

+    while True:

+        print('-'*20, 'Session %d' % session_id, '-'*20)

+        image_input = input(' Please input a picture: ')

+        if image_input.lower() == 'exit':  # type 'exit' to quit the dialogue

+            break

+

+        text_input = input(' Please enter the text: ')

+        if text_input.lower() == 'exit':  # type 'exit' to quit the dialogue

+            break

+

+        # Keep only the inputs the user actually provided (an empty string means skipped)

+        all_input = [{'image': image_input}, {'text': text_input}]

+        input_list = [_input for _input in all_input if list(_input.values())[0] != '']

+

+        if len(input_list) == 0:

+            print("Input list should not be empty. Please try again with valid input.")

+            continue

+

+        query = tokenizer.from_list_format(input_list)

+        response, history = model.chat(tokenizer, query=query, history=history)

+

+        print('-'*10, 'Response', '-'*10)

+        print(response, '\n')

+

+        # Save an image only when draw_bbox_on_latest_picture returns one

+        image = tokenizer.draw_bbox_on_latest_picture(response, history)

+        if image is not None:

+            image.save(os.path.join(current_path, f'Session_{session_id}.png'))

+

+        session_id += 1

diff --git a/python/llm/example/CPU/PyTorch-Models/Model/README.md b/python/llm/example/CPU/PyTorch-Models/Model/README.md

index 3cee8c45..40288dd4 100644

--- a/python/llm/example/CPU/PyTorch-Models/Model/README.md

+++ b/python/llm/example/CPU/PyTorch-Models/Model/README.md

@@ -11,6 +11,8 @@ You can use `optimize_model` API to accelerate general PyTorch models on Intel s

| Bark | [link](bark) |

| Mistral | [link](mistral) |

| Flan-t5 | [link](flan-t5) |

+| Phi-1_5 | [link](phi-1_5) |

+| Qwen-VL | [link](qwen-vl) |

## Recommended Requirements

To run the examples, we recommend using Intel® Xeon® processors (server), or >= 12th Gen Intel® Core™ processor (client).

diff --git a/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/README.md b/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/README.md

new file mode 100644

index 00000000..444929ff

--- /dev/null

+++ b/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/README.md

@@ -0,0 +1,90 @@

+# Qwen-VL

+This directory contains examples of using the BigDL-LLM `optimize_model` API to accelerate Qwen-VL models. For illustration, we use [Qwen/Qwen-VL-Chat](https://huggingface.co/Qwen/Qwen-VL-Chat) as the reference Qwen-VL model.

+

+## Requirements

+To run these examples with BigDL-LLM, your machine should meet some recommended requirements; please refer to [here](../README.md#recommended-requirements) for more information.

+

+## Example: Multimodal chat using `chat()` API

+The example [chat.py](./chat.py) shows a basic use case: starting a multimodal chat with a Qwen-VL model through the `chat()` API, accelerated with the BigDL-LLM `optimize_model` API.

+### 1. Install

+We suggest using conda to manage the Python environment. For more information about conda installation, please refer to [here](https://docs.conda.io/en/latest/miniconda.html#).

+

+After installing conda, create a Python environment for BigDL-LLM:

+```bash

+conda create -n llm python=3.9 # Python 3.9 is recommended

+conda activate llm

+

+pip install --pre --upgrade bigdl-llm[all] # install the latest bigdl-llm nightly build with 'all' option

+

+pip install accelerate tiktoken einops transformers_stream_generator==0.0.4 scipy torchvision pillow tensorboard matplotlib # additional packages required by Qwen-VL-Chat for generation

+

+```

+

+### 2. Run

+After setting up the Python environment, you can run the example with the following steps.

+

+#### 2.1 Client

+On client Windows machines, it is recommended to run directly with full utilization of all cores:

+```powershell

+python ./chat.py

+```

+More information about the arguments can be found in the [Arguments Info](#23-arguments-info) section. The expected output can be found in the [Sample Output](#24-sample-output) section.

+

+#### 2.2 Server

+For optimal performance on a server, it is recommended to set several environment variables (refer to [here](../README.md#best-known-configuration-on-linux) for more information) and to run the example with all the physical cores of a single socket.

+

+E.g. on Linux,

+```bash

+# set BigDL-Nano env variables

+source bigdl-nano-init

+

+# e.g. for a server with 48 cores per socket

+export OMP_NUM_THREADS=48

+numactl -C 0-47 -m 0 python ./chat.py

+```

+More information about the arguments can be found in the [Arguments Info](#23-arguments-info) section. The expected output can be found in the [Sample Output](#24-sample-output) section.

+

+#### 2.3 Arguments Info

+In the example, several arguments can be passed to satisfy your requirements:

+

+- `--repo-id-or-model-path`: str, the Hugging Face repo id of the Qwen-VL model to download, or the path to a local Hugging Face checkpoint folder. Defaults to `'Qwen/Qwen-VL-Chat'`.

+- `--n-predict`: int, the maximum number of tokens to predict. Defaults to `32`.

+

+In every session, you are prompted for an image and then for text. Press **Enter** on an empty line to skip either input, or type **'exit'** at any prompt to quit the dialogue.

+

+When a response produces an output image, it is saved as `Session_<N>.png`, where `<N>` is the session number, in the same directory as `chat.py`.

+

+#### 2.4 Sample Output

+#### [Qwen/Qwen-VL-Chat](https://huggingface.co/Qwen/Qwen-VL-Chat)

+

+```log

+-------------------- Session 1 --------------------

+ Please input a picture: https://images.unsplash.com/photo-1533738363-b7f9aef128ce?auto=format&fit=crop&q=60&w=500&ixlib=rb-4.0.3&ixid=M3wxMjA3fDB8MHxzZWFyY2h8NHx8Y2F0fGVufDB8fDB8fHwy

+ Please enter the text: 这是什么

+---------- Response ----------

+图中是一只戴着墨镜的酷炫猫咪,正坐在窗边,看着窗外。

+

+-------------------- Session 2 --------------------

+ Please input a picture:

+ Please enter the text: 这只猫猫多大了?

+---------- Response ----------

+由于只猫猫戴着太阳镜,无法判断年龄,但可以猜测它应该是一只成年猫猫,已经成年。

+

+-------------------- Session 3 --------------------

+ Please input a picture:

+ Please enter the text: 在图中检测框出猫猫的墨镜

+---------- Response ----------

+[猫猫的墨镜](398,313),(994,506)

+

+-------------------- Session 4 --------------------

+ Please input a picture: exit

+```

+

+The sample input image in Session 1 is (which is fetched from [here](https://images.unsplash.com/photo-1533738363-b7f9aef128ce?auto=format&fit=crop&q=60&w=500&ixlib=rb-4.0.3&ixid=M3wxMjA3fDB8MHxzZWFyY2h8NHx8Y2F0fGVufDB8fDB8fHwy)):

+

+ +

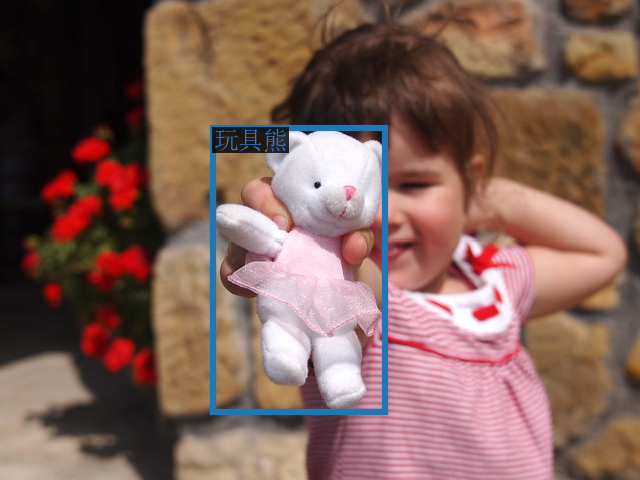

+The sample output image in Session 3 is:

+

+

+

+The sample output image in Session 3 is:

+

+ +
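Section 2.2 of the README pins the run to the physical cores of one socket by setting `OMP_NUM_THREADS` and wrapping the script in `numactl`. The sketch below derives both from a cores-per-socket count. It is a minimal illustration, not part of this patch: the function name `build_server_launch` is hypothetical, and it assumes the cores of the chosen socket are numbered contiguously starting at `first_core`, which should be checked on the target machine (for example with `lscpu` or `numactl --hardware`).

```python
def build_server_launch(cores_per_socket, script="./chat.py", numa_node=0, first_core=0):
    """Return (env, argv) for a single-socket run of `script`.

    Assumption: the socket's physical cores are numbered contiguously
    from `first_core`; verify the layout before relying on this.
    """
    last_core = first_core + cores_per_socket - 1
    # One OpenMP thread per physical core on the socket
    env = {"OMP_NUM_THREADS": str(cores_per_socket)}
    # Bind CPU cores (-C) and memory allocation (-m) to the same NUMA node
    argv = [
        "numactl",
        "-C", f"{first_core}-{last_core}",
        "-m", str(numa_node),
        "python", script,
    ]
    return env, argv
```

For the 48-core example in the README, `build_server_launch(48)` reproduces `OMP_NUM_THREADS=48` and `numactl -C 0-47 -m 0 python ./chat.py`.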

diff --git a/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/chat.py b/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/chat.py

new file mode 100644

index 00000000..5502a697

--- /dev/null

+++ b/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/chat.py

@@ -0,0 +1,85 @@

+#

+# Copyright 2016 The BigDL Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+from transformers import AutoModelForCausalLM, AutoTokenizer

+from transformers.generation import GenerationConfig

+import torch

+import os

+import argparse

+from bigdl.llm import optimize_model

+torch.manual_seed(1234)

+

+if __name__ == '__main__':

+    parser = argparse.ArgumentParser(description='Predict Tokens using `chat()` API for Qwen-VL model')

+    parser.add_argument('--repo-id-or-model-path', type=str, default="Qwen/Qwen-VL-Chat",

+                        help='The huggingface repo id for the Qwen-VL model to be downloaded'

+                             ', or the path to the huggingface checkpoint folder')

+    # NOTE: this example parses --n-predict but does not pass it to chat()

+    parser.add_argument('--n-predict', type=int, default=32, help='Max tokens to predict')

+

+    current_path = os.path.dirname(os.path.abspath(__file__))

+    args = parser.parse_args()

+    model_path = args.repo_id_or_model_path

+

+    # Load model

+    model = AutoModelForCausalLM.from_pretrained(model_path, device_map="cpu", trust_remote_code=True)

+

+    # With only one line to enable BigDL-LLM optimization on model

+    # For successful BigDL-LLM optimization on Qwen-VL-Chat, skip the 'c_fc' and 'out_proj' modules during optimization

+    model = optimize_model(model,

+                           low_bit='sym_int4',

+                           modules_to_not_convert=['c_fc', 'out_proj'])

+

+    # Specify hyperparameters for generation (No need to do this if you are using transformers>=4.32.0)

+    model.generation_config = GenerationConfig.from_pretrained(model_path, trust_remote_code=True)

+

+    # Load tokenizer

+    tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

+

+    # Session ID and chat history (history stays None until the first response)

+    session_id = 1

+    history = None

+

+    while True:

+        print('-'*20, 'Session %d' % session_id, '-'*20)

+        image_input = input(' Please input a picture: ')

+        if image_input.lower() == 'exit':  # type 'exit' to quit the dialogue

+            break

+

+        text_input = input(' Please enter the text: ')

+        if text_input.lower() == 'exit':  # type 'exit' to quit the dialogue

+            break

+

+        # Keep only the inputs the user actually provided (an empty string means skipped)

+        all_input = [{'image': image_input}, {'text': text_input}]

+        input_list = [_input for _input in all_input if list(_input.values())[0] != '']

+

+        if len(input_list) == 0:

+            print("Input list should not be empty. Please try again with valid input.")

+            continue

+

+        query = tokenizer.from_list_format(input_list)

+        response, history = model.chat(tokenizer, query=query, history=history)

+

+        print('-'*10, 'Response', '-'*10)

+        print(response, '\n')

+

+        # Save an image only when draw_bbox_on_latest_picture returns one

+        image = tokenizer.draw_bbox_on_latest_picture(response, history)

+        if image is not None:

+            image.save(os.path.join(current_path, f'Session_{session_id}.png'))

+

+        session_id += 1

+
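The chat loop in `chat.py` builds its query from whichever of the two prompts the user actually filled in, and treats `exit` (in any letter case) as the quit signal. A minimal sketch of that input handling, pulled out into standalone functions so it can be exercised without loading a model, might look like this. The function names `build_input_list` and `wants_exit` are hypothetical and are not part of this patch.

```python
def build_input_list(image_input, text_input):
    """Return the list-format items for one turn, dropping skipped inputs.

    An empty string means the user pressed Enter without typing anything.
    """
    candidates = [{'image': image_input}, {'text': text_input}]
    return [item for item in candidates if next(iter(item.values())) != '']


def wants_exit(user_input):
    """Return True when the user typed 'exit' in any letter case."""
    return user_input.lower() == 'exit'
```

An empty result from `build_input_list` corresponds to the "Input list should not be empty" branch in the loop, where the session is retried without calling the model.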

diff --git a/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/chat.py b/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/chat.py

new file mode 100644

index 00000000..5502a697

--- /dev/null

+++ b/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/chat.py

@@ -0,0 +1,85 @@

+#

+# Copyright 2016 The BigDL Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+from transformers import AutoModelForCausalLM, AutoTokenizer

+from transformers.generation import GenerationConfig

+import torch

+import time

+import os

+import argparse

+from bigdl.llm import optimize_model

+torch.manual_seed(1234)

+

+if __name__ == '__main__':

+ parser = argparse.ArgumentParser(description='Predict Tokens using `chat()` API for Qwen-VL model')

+ parser.add_argument('--repo-id-or-model-path', type=str, default="Qwen/Qwen-VL-Chat",

+ help='The huggingface repo id for the Qwen-VL model to be downloaded'

+ ', or the path to the huggingface checkpoint folder')

+ parser.add_argument('--n-predict', type=int, default=32, help='Max tokens to predict')

+

+ current_path = os.path.dirname(os.path.abspath(__file__))

+ args = parser.parse_args()

+ model_path = args.repo_id_or_model_path

+

+ # Load model

+ model = AutoModelForCausalLM.from_pretrained(model_path, device_map="cpu", trust_remote_code=True)

+

+ # With only one line to enable BigDL-LLM optimization on model

+ # For successful BigDL-LLM optimization on Qwen-VL-Chat, skip the 'c_fc' and 'out_proj' modules during optimization

+ model = optimize_model(model,

+ low_bit='sym_int4',

+ modules_to_not_convert=['c_fc', 'out_proj'])

+

+ # Specify hyperparameters for generation (No need to do this if you are using transformers>=4.32.0)

+ model.generation_config = GenerationConfig.from_pretrained(model_path, trust_remote_code=True)

+

+ # Load tokenizer

+ tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

+

+ # Session ID

+ session_id = 1

+

+ while True:

+ print('-'*20, 'Session %d' % session_id, '-'*20)

+ image_input = input(f' Please input a picture: ')

+ if image_input.lower() == 'exit' : # type 'exit' to quit the dialouge

+ break

+

+ text_input = input(f' Please enter the text: ')

+ if text_input.lower() == 'exit' : # type 'exit' to quit the dialouge

+ break

+

+ if session_id == 1:

+ history = None

+

+ all_input = [{'image': image_input}, {'text': text_input}]

+ input_list = [_input for _input in all_input if list(_input.values())[0] != '']

+

+ if len(input_list) == 0:

+ print("Input list should not be empty. Please try again with valid input.")

+ continue

+

+ query = tokenizer.from_list_format(input_list)

+ response, history = model.chat(tokenizer, query = query, history = history)

+

+ print('-'*10, 'Response', '-'*10)

+ print(response, '\n')

+

+ image = tokenizer.draw_bbox_on_latest_picture(response, history)

+ if image is not None:

+ image.save(os.path.join(current_path, f'Session_{session_id}.png'), )

+

+ session_id += 1

+

+The sample output image in Session 3 is:

+

+

+

+The sample output image in Session 3 is:

+

+ +

+

+

diff --git a/python/llm/example/CPU/HF-Transformers-AutoModels/Model/qwen-vl/chat.py b/python/llm/example/CPU/HF-Transformers-AutoModels/Model/qwen-vl/chat.py

new file mode 100644

index 00000000..6c017755

--- /dev/null

+++ b/python/llm/example/CPU/HF-Transformers-AutoModels/Model/qwen-vl/chat.py

@@ -0,0 +1,85 @@

+#

+# Copyright 2016 The BigDL Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+from bigdl.llm.transformers import AutoModel, AutoModelForCausalLM

+from transformers import AutoTokenizer, LlamaTokenizer

+from transformers.generation import GenerationConfig

+import torch

+import time

+import os

+import argparse

+from bigdl.llm import optimize_model

+torch.manual_seed(1234)

+

+if __name__ == '__main__':
+    parser = argparse.ArgumentParser(description='Predict Tokens using `chat()` API for Qwen-VL model')
+    parser.add_argument('--repo-id-or-model-path', type=str, default="Qwen/Qwen-VL-Chat",
+                        help='The huggingface repo id for the Qwen-VL model to be downloaded'
+                             ', or the path to the huggingface checkpoint folder')
+    parser.add_argument('--n-predict', type=int, default=32, help='Max tokens to predict')
+
+    current_path = os.path.dirname(os.path.abspath(__file__))
+    args = parser.parse_args()
+    model_path = args.repo_id_or_model_path
+
+    # Load model
+    # For successful BigDL-LLM optimization on Qwen-VL-Chat, skip the 'c_fc' and 'out_proj' modules during optimization
+    model = AutoModelForCausalLM.from_pretrained(model_path,
+                                                 load_in_4bit=True,
+                                                 device_map="cpu",
+                                                 trust_remote_code=True,
+                                                 modules_to_not_convert=['c_fc', 'out_proj'])
+
+    # Specify hyperparameters for generation (No need to do this if you are using transformers>=4.32.0)
+    model.generation_config = GenerationConfig.from_pretrained(model_path, trust_remote_code=True)
+
+    # Load tokenizer
+    tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)
+
+    # Session ID
+    session_id = 1
+
+    while True:
+        print('-'*20, 'Session %d' % session_id, '-'*20)
+        image_input = input(' Please input a picture: ')
+        if image_input.lower() == 'exit':  # type 'exit' to quit the dialogue
+            break
+
+        text_input = input(' Please enter the text: ')
+        if text_input.lower() == 'exit':  # type 'exit' to quit the dialogue
+            break
+
+        if session_id == 1:
+            history = None
+
+        all_input = [{'image': image_input}, {'text': text_input}]
+        input_list = [_input for _input in all_input if list(_input.values())[0] != '']
+
+        if len(input_list) == 0:
+            print("Input list should not be empty. Please try again with valid input.")
+            continue
+
+        query = tokenizer.from_list_format(input_list)
+        response, history = model.chat(tokenizer, query=query, history=history)
+
+        print('-'*10, 'Response', '-'*10)
+        print(response, '\n')
+
+        image = tokenizer.draw_bbox_on_latest_picture(response, history)
+        if image is not None:
+            image.save(os.path.join(current_path, f'Session_{session_id}.png'))
+
+        session_id += 1

diff --git a/python/llm/example/CPU/PyTorch-Models/Model/README.md b/python/llm/example/CPU/PyTorch-Models/Model/README.md

index 3cee8c45..40288dd4 100644

--- a/python/llm/example/CPU/PyTorch-Models/Model/README.md

+++ b/python/llm/example/CPU/PyTorch-Models/Model/README.md

@@ -11,6 +11,8 @@ You can use `optimize_model` API to accelerate general PyTorch models on Intel s

| Bark | [link](bark) |

| Mistral | [link](mistral) |

| Flan-t5 | [link](flan-t5) |

+| Phi-1_5 | [link](phi-1_5) |

+| Qwen-VL | [link](qwen-vl) |

## Recommended Requirements

To run the examples, we recommend using Intel® Xeon® processors (server), or >= 12th Gen Intel® Core™ processor (client).

diff --git a/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/README.md b/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/README.md

new file mode 100644

index 00000000..444929ff

--- /dev/null

+++ b/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/README.md

@@ -0,0 +1,90 @@

+# Qwen-VL

+In this directory, you will find examples of how to use the BigDL-LLM `optimize_model` API to accelerate Qwen-VL models. For illustration purposes, we use [Qwen/Qwen-VL-Chat](https://huggingface.co/Qwen/Qwen-VL-Chat) as the reference Qwen-VL model.

+

+## Requirements

+To run these examples with BigDL-LLM, your machine should meet some recommended requirements; please refer to [here](../README.md#recommended-requirements) for more information.

+

+## Example: Multimodal chat using `chat()` API

+In the example [chat.py](./chat.py), we show a basic use case for a Qwen-VL model to start a multimodal chat using the `chat()` API, with the BigDL-LLM `optimize_model` API.

+### 1. Install

+We suggest using conda to manage the Python environment. For more information about conda installation, please refer to [here](https://docs.conda.io/en/latest/miniconda.html#).

+

+After installing conda, create a Python environment for BigDL-LLM:

+```bash

+conda create -n llm python=3.9 # Python 3.9 is recommended

+conda activate llm

+

+pip install --pre --upgrade bigdl-llm[all] # install the latest bigdl-llm nightly build with 'all' option

+

+pip install accelerate tiktoken einops transformers_stream_generator==0.0.4 scipy torchvision pillow tensorboard matplotlib # additional packages required for Qwen-VL-Chat to conduct generation

+

+```

+

+### 2. Run

+After setting up the Python environment, you can run the example by following the steps below.

+

+#### 2.1 Client

+On client Windows machines, it is recommended to run directly with full utilization of all cores:

+```powershell

+python ./chat.py

+```

+More information about the arguments can be found in the [Arguments Info](#23-arguments-info) section. The expected output can be found in the [Sample Chat](#24-sample-chat) section.

+

+#### 2.2 Server

+For optimal performance on a server, it is recommended to set several environment variables (refer to [here](../README.md#best-known-configuration-on-linux) for more information), and run the example with all the physical cores of a single socket.

+

+E.g. on Linux,

+```bash

+# set BigDL-Nano env variables

+source bigdl-nano-init

+

+# e.g. for a server with 48 cores per socket

+export OMP_NUM_THREADS=48

+numactl -C 0-47 -m 0 python ./chat.py

+```

+More information about the arguments can be found in the [Arguments Info](#23-arguments-info) section. The expected output can be found in the [Sample Chat](#24-sample-chat) section.
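As a rough illustration of how the server command above scales to other machines, the following sketch builds the same `numactl` invocation for a given core count. The helper name `numactl_command` is hypothetical, and it assumes that the cores of each socket are numbered contiguously, which is not guaranteed on every machine; check the actual topology (for example with `lscpu`) before relying on it.

```python
def numactl_command(cores_per_socket: int, socket: int = 0, script: str = "./chat.py") -> str:
    """Build a numactl command that pins the script to one socket.

    Hypothetical helper: assumes core IDs are contiguous per socket.
    """
    if cores_per_socket < 1:
        raise ValueError("cores_per_socket must be at least 1")
    first_core = socket * cores_per_socket
    last_core = first_core + cores_per_socket - 1
    return f"numactl -C {first_core}-{last_core} -m {socket} python {script}"


def omp_threads_env(cores_per_socket: int) -> dict:
    """Environment variable matching the number of pinned physical cores."""
    return {"OMP_NUM_THREADS": str(cores_per_socket)}


if __name__ == "__main__":
    print(omp_threads_env(48))
    print(numactl_command(48))
```

For a server with 48 cores per socket, this reproduces the command shown in the example above.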

+

+#### 2.3 Arguments Info

+In the example, several arguments can be passed to satisfy your requirements:

+

+- `--repo-id-or-model-path`: str, the Hugging Face repo id of the Qwen-VL model to be downloaded, or the path to the Hugging Face checkpoint folder. Defaults to `'Qwen/Qwen-VL-Chat'`.

+- `--n-predict`: int, the maximum number of tokens to predict. Defaults to `32`.
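The two arguments can be exercised in isolation with a small sketch. The `build_parser` wrapper is a hypothetical name used only for illustration; the argument definitions mirror those in `chat.py`. Note that `argparse` turns the dashes in option names into underscores on the parsed namespace.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Recreate the two command-line arguments described above."""
    parser = argparse.ArgumentParser(
        description='Predict Tokens using `chat()` API for Qwen-VL model')
    parser.add_argument('--repo-id-or-model-path', type=str, default="Qwen/Qwen-VL-Chat",
                        help='The huggingface repo id for the Qwen-VL model to be downloaded'
                             ', or the path to the huggingface checkpoint folder')
    parser.add_argument('--n-predict', type=int, default=32, help='Max tokens to predict')
    return parser


if __name__ == "__main__":
    # Passing an explicit list avoids reading sys.argv, which is convenient for testing.
    args = build_parser().parse_args(['--n-predict', '64'])
    print(args.repo_id_or_model_path, args.n_predict)
```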

+

+In every session, an image and text can be entered at the command line (press **Enter** to skip an input); type **'exit'** at any prompt to quit the dialogue.
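The skipping behaviour can be sketched independently of the model. The function name `build_input_list` is hypothetical; the filtering logic follows the list comprehension in `chat.py`, which drops any entry whose value is an empty string before the list is passed to `tokenizer.from_list_format`.

```python
def build_input_list(image_input: str, text_input: str) -> list:
    """Keep only the inputs the user actually provided.

    An empty string means the user pressed Enter to skip that prompt.
    """
    all_input = [{'image': image_input}, {'text': text_input}]
    return [item for item in all_input if list(item.values())[0] != '']


if __name__ == "__main__":
    # Text only: the image prompt was skipped.
    print(build_input_list('', 'How old is this cat?'))
    # Both skipped: chat.py prints a warning and asks again in this case.
    print(build_input_list('', ''))
```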

+

+Each output image is saved as `Session_<session id>.png` in the same directory as `chat.py`.
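A minimal sketch of how the output file name is derived, assuming the same `os.path.join` pattern as `chat.py`. The helper name `session_image_path` is hypothetical, and the base directory is passed in explicitly here rather than computed from `__file__`.

```python
import os


def session_image_path(base_dir: str, session_id: int) -> str:
    """Return the path used for the image produced in a given session."""
    return os.path.join(base_dir, f'Session_{session_id}.png')


if __name__ == "__main__":
    # chat.py uses the directory that contains the script as base_dir.
    script_dir = os.path.dirname(os.path.abspath(__file__))
    print(session_image_path(script_dir, 3))
```

An image is only written when `draw_bbox_on_latest_picture` returns something other than `None`, so sessions without a detection result leave no file behind.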

+

+#### 2.4 Sample Chat

+#### [Qwen/Qwen-VL-Chat](https://huggingface.co/Qwen/Qwen-VL-Chat)

+

+```log

+-------------------- Session 1 --------------------

+ Please input a picture: https://images.unsplash.com/photo-1533738363-b7f9aef128ce?auto=format&fit=crop&q=60&w=500&ixlib=rb-4.0.3&ixid=M3wxMjA3fDB8MHxzZWFyY2h8NHx8Y2F0fGVufDB8fDB8fHwy

+ Please enter the text: 这是什么

+---------- Response ----------

+图中是一只戴着墨镜的酷炫猫咪,正坐在窗边,看着窗外。

+

+-------------------- Session 2 --------------------

+ Please input a picture:

+ Please enter the text: 这只猫猫多大了?

+---------- Response ----------

+由于只猫猫戴着太阳镜,无法判断年龄,但可以猜测它应该是一只成年猫猫,已经成年。

+

+-------------------- Session 3 --------------------

+ Please input a picture:

+ Please enter the text: 在图中检测框出猫猫的墨镜

+---------- Response ----------

+猫猫的墨镜

+

+```

+

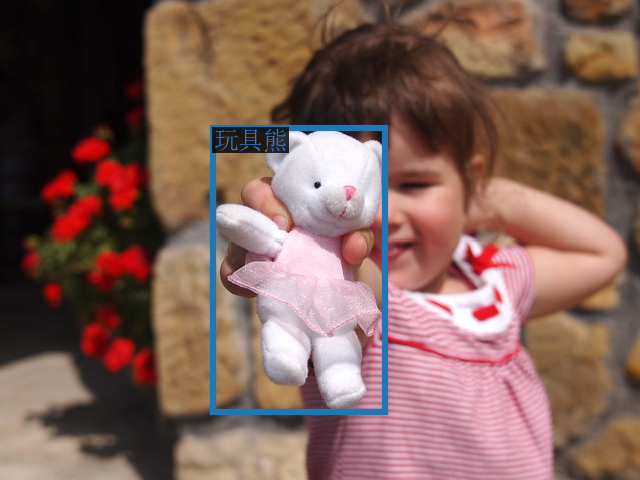

+The sample output image in Session 3 is:

+

+

+

+The sample output image in Session 3 is:

+

+ +

diff --git a/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/chat.py b/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/chat.py

new file mode 100644

index 00000000..5502a697

--- /dev/null

+++ b/python/llm/example/CPU/PyTorch-Models/Model/qwen-vl/chat.py

@@ -0,0 +1,85 @@

+#

+# Copyright 2016 The BigDL Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+from transformers import AutoModelForCausalLM, AutoTokenizer

+from transformers.generation import GenerationConfig

+import torch

+import time

+import os

+import argparse

+from bigdl.llm import optimize_model

+torch.manual_seed(1234)

+

+if __name__ == '__main__':
+    parser = argparse.ArgumentParser(description='Predict Tokens using `chat()` API for Qwen-VL model')
+    parser.add_argument('--repo-id-or-model-path', type=str, default="Qwen/Qwen-VL-Chat",
+                        help='The huggingface repo id for the Qwen-VL model to be downloaded'
+                             ', or the path to the huggingface checkpoint folder')
+    parser.add_argument('--n-predict', type=int, default=32, help='Max tokens to predict')
+
+    current_path = os.path.dirname(os.path.abspath(__file__))
+    args = parser.parse_args()
+    model_path = args.repo_id_or_model_path
+
+    # Load model
+    model = AutoModelForCausalLM.from_pretrained(model_path, device_map="cpu", trust_remote_code=True)
+
+    # With only one line to enable BigDL-LLM optimization on model
+    # For successful BigDL-LLM optimization on Qwen-VL-Chat, skip the 'c_fc' and 'out_proj' modules during optimization
+    model = optimize_model(model,
+                           low_bit='sym_int4',
+                           modules_to_not_convert=['c_fc', 'out_proj'])
+
+    # Specify hyperparameters for generation (No need to do this if you are using transformers>=4.32.0)
+    model.generation_config = GenerationConfig.from_pretrained(model_path, trust_remote_code=True)
+
+    # Load tokenizer
+    tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)
+
+    # Session ID
+    session_id = 1
+
+    while True:
+        print('-'*20, 'Session %d' % session_id, '-'*20)
+        image_input = input(' Please input a picture: ')
+        if image_input.lower() == 'exit':  # type 'exit' to quit the dialogue
+            break
+
+        text_input = input(' Please enter the text: ')
+        if text_input.lower() == 'exit':  # type 'exit' to quit the dialogue
+            break
+
+        if session_id == 1:
+            history = None
+
+        all_input = [{'image': image_input}, {'text': text_input}]
+        input_list = [_input for _input in all_input if list(_input.values())[0] != '']
+
+        if len(input_list) == 0:
+            print("Input list should not be empty. Please try again with valid input.")
+            continue
+
+        query = tokenizer.from_list_format(input_list)
+        response, history = model.chat(tokenizer, query=query, history=history)
+
+        print('-'*10, 'Response', '-'*10)
+        print(response, '\n')
+
+        image = tokenizer.draw_bbox_on_latest_picture(response, history)
+        if image is not None:
+            image.save(os.path.join(current_path, f'Session_{session_id}.png'))
+
+        session_id += 1

+
