-

-For Linux:

+- For **Linux users**:

```bash

@@ -69,10 +68,8 @@ Start ipex-llm-xpu Docker Container. Choose one of the following commands to sta

-v $MODEL_PATH:/llm/models \

$DOCKER_IMAGE

```

-

-

----

## Run/Develop Pytorch Examples

@@ -108,10 +103,11 @@ Now you are in a running Docker Container, Open folder `/ipex-llm/python/llm/exa

In this folder, we provide several PyTorch examples that show how to apply IPEX-LLM INT4 optimizations to models on Intel GPUs.

For example, if your model is Llama-2-7b-chat-hf and is mounted at `/llm/models`, navigate to the `llama2` directory and execute the following command to run the example:

- ```bash

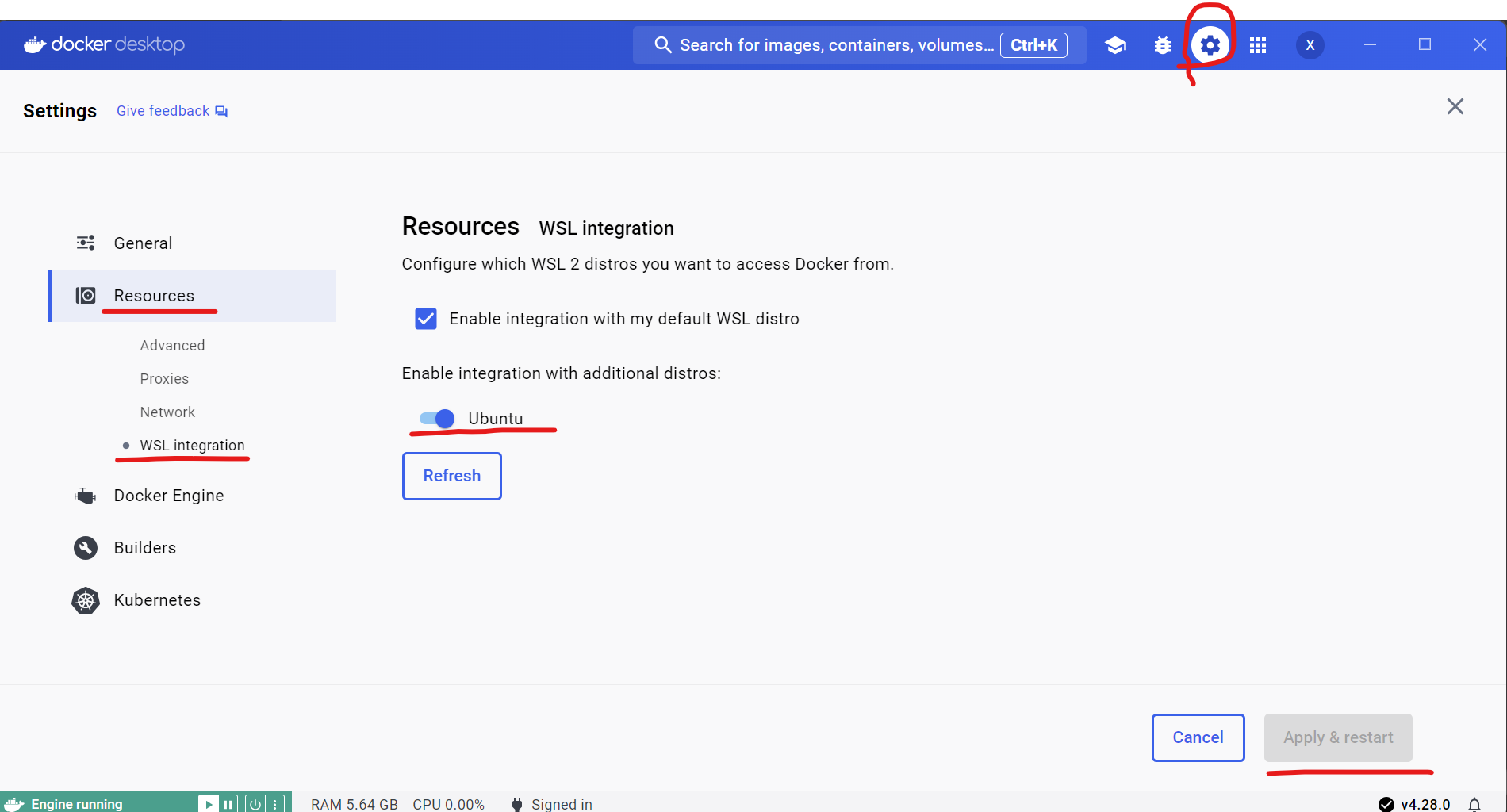

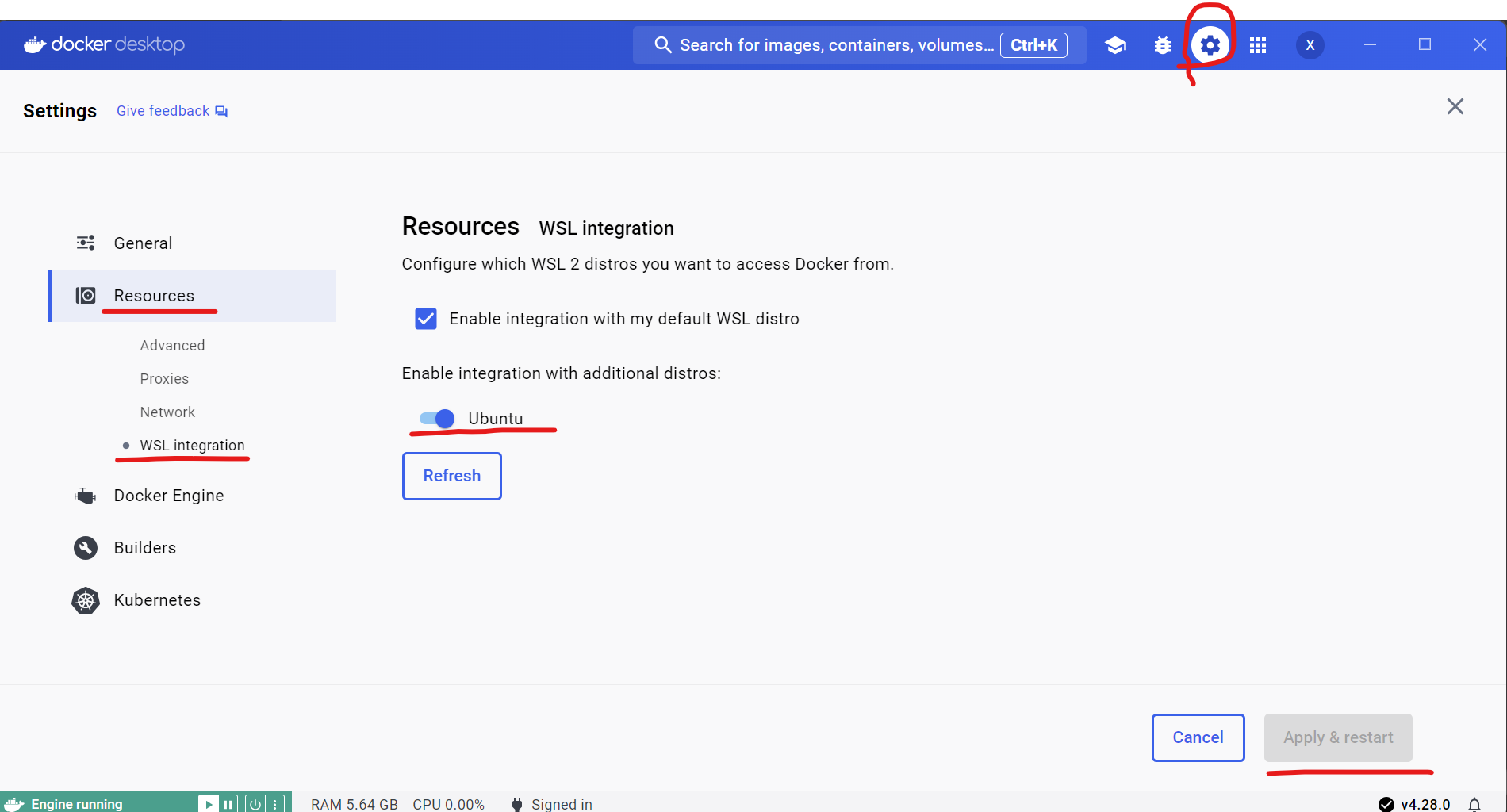

- cd

-For Windows WSL:

+- For **Windows WSL users**:

```bash

#/bin/bash

@@ -91,9 +88,7 @@ Start ipex-llm-xpu Docker Container. Choose one of the following commands to sta

-v /usr/lib/wsl:/usr/lib/wsl \

$DOCKER_IMAGE

```

-

-

+

+

-

+

+  +

> [!TIP]

diff --git a/docs/mddocs/Overview/KeyFeatures/inference_on_gpu.md b/docs/mddocs/Overview/KeyFeatures/inference_on_gpu.md

index 126dc2af..4ce0df60 100644

--- a/docs/mddocs/Overview/KeyFeatures/inference_on_gpu.md

+++ b/docs/mddocs/Overview/KeyFeatures/inference_on_gpu.md

@@ -30,7 +30,7 @@ You could choose to use [PyTorch API](./optimize_model.md) or [`transformers`-st

model = model.to('xpu') # Important after obtaining the optimized model

```

- > **Tip**"

+ > **Tip**:

>

> For Windows users running LLMs on Intel iGPUs, we recommend setting `cpu_embedding=True` in the `optimize_model` function. This allows the memory-intensive embedding layer to run on the CPU instead of the iGPU.

>

diff --git a/docs/mddocs/Quickstart/install_linux_gpu.md b/docs/mddocs/Quickstart/install_linux_gpu.md

index d4442b0b..afb64e6f 100644

--- a/docs/mddocs/Quickstart/install_linux_gpu.md

+++ b/docs/mddocs/Quickstart/install_linux_gpu.md

@@ -284,7 +284,9 @@ Now let's play with a real LLM. We'll be using the [phi-1.5](https://huggingface

print(output_str)

```

- > **Note**: When running LLMs on Intel iGPUs with limited memory size, we recommend setting `cpu_embedding=True` in the `from_pretrained` function.

+ > **Note**:

+ >

+ > When running LLMs on Intel iGPUs with limited memory size, we recommend setting `cpu_embedding=True` in the `from_pretrained` function.

> This will allow the memory-intensive embedding layer to utilize the CPU instead of GPU.

- Step 5. Run `demo.py` within the activated Python environment using the following command:

diff --git a/docs/mddocs/Quickstart/install_windows_gpu.md b/docs/mddocs/Quickstart/install_windows_gpu.md

index feddbed2..eb7fa9f9 100644

--- a/docs/mddocs/Quickstart/install_windows_gpu.md

+++ b/docs/mddocs/Quickstart/install_windows_gpu.md

@@ -102,7 +102,9 @@ You can verify if `ipex-llm` is successfully installed following below steps.

torch.Size([1, 1, 40, 40])

```

- > **Tip**: If you encounter any problem, please refer to [here](../Overview/install_gpu.md#troubleshooting) for help.

+ > **Tip**:

+ >

+ > If you encounter any problem, please refer to [here](../Overview/install_gpu.md#troubleshooting) for help.

- To exit the Python interactive shell, simply press Ctrl+Z then press Enter (or input `exit()` then press Enter).

@@ -239,7 +241,9 @@ Now let's play with a real LLM. We'll be using the [Qwen-1.8B-Chat](https://hugg

output_str = tokenizer.decode(output[0], skip_special_tokens=True)

print(output_str)

```

- > **Note**: Please note that the repo id on ModelScope may be different from Hugging Face for some models.

+ > **Note**:

+ >

+ > The repo id on ModelScope may differ from the one on Hugging Face for some models.

> [!NOTE]

> When running LLMs on Intel iGPUs with limited memory size, we recommend setting `cpu_embedding=True` in the `from_pretrained` function.
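To get a rough sense of why the embedding layer is worth offloading, the sketch below estimates its size as vocabulary size × hidden size × bytes per element. The numbers used (a 151,936-token vocabulary, hidden size 2,048, 2-byte fp16 weights) are illustrative assumptions rather than values taken from this guide; substitute the values from your model's `config.json`.

```python
def embedding_bytes(vocab_size: int, hidden_size: int, bytes_per_param: int = 2) -> int:
    """Approximate memory taken by a token-embedding table."""
    return vocab_size * hidden_size * bytes_per_param


if __name__ == "__main__":
    # Hypothetical example values; read the real ones from the model's config.json.
    size = embedding_bytes(vocab_size=151_936, hidden_size=2_048)
    print(f"{size / 2**20:.1f} MiB")  # prints 593.5 MiB
```

On an iGPU that shares a limited memory budget with the rest of the system, a table of this size is a plausible candidate for keeping on the CPU, which is what `cpu_embedding=True` is described as doing.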

diff --git a/docs/mddocs/Quickstart/llama_cpp_quickstart.md b/docs/mddocs/Quickstart/llama_cpp_quickstart.md

index 455d96f4..300b275e 100644

--- a/docs/mddocs/Quickstart/llama_cpp_quickstart.md

+++ b/docs/mddocs/Quickstart/llama_cpp_quickstart.md

@@ -135,7 +135,9 @@ Before running, you should download or copy community GGUF model to your current

./main -m mistral-7b-instruct-v0.1.Q4_K_M.gguf -n 32 --prompt "Once upon a time, there existed a little girl who liked to have adventures. She wanted to go to places and meet new people, and have fun" -t 8 -e -ngl 33 --color

```

- > **Note**: For more details about meaning of each parameter, you can use `./main -h`.

+ > **Note**:

+ >

+ > For more details about meaning of each parameter, you can use `./main -h`.

- For **Windows users**:

@@ -145,7 +147,9 @@ Before running, you should download or copy community GGUF model to your current

main -m mistral-7b-instruct-v0.1.Q4_K_M.gguf -n 32 --prompt "Once upon a time, there existed a little girl who liked to have adventures. She wanted to go to places and meet new people, and have fun" -t 8 -e -ngl 33 --color

```

- > **Note**: For more details about meaning of each parameter, you can use `main -h`.

+ > **Note**:

+ >

+ > For more details about meaning of each parameter, you can use `main -h`.
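As a reading aid for the command above, here is a minimal Python sketch that assembles the same argument list. The helper name `build_main_command` is hypothetical, and the per-flag comments reflect a general reading of the llama.cpp `main` options; treat `main -h` as the authoritative description.

```python
import shlex


def build_main_command(binary: str, model: str, prompt: str,
                       n_predict: int = 32, threads: int = 8,
                       gpu_layers: int = 33) -> list[str]:
    """Assemble the argument list for the llama.cpp `main` example."""
    return [
        binary,
        "-m", model,              # path to the GGUF model file
        "-n", str(n_predict),     # number of tokens to generate
        "--prompt", prompt,
        "-t", str(threads),       # CPU threads to use
        "-e",                     # process escape sequences in the prompt
        "-ngl", str(gpu_layers),  # number of layers to offload to the GPU
        "--color",
    ]


if __name__ == "__main__":
    cmd = build_main_command("./main", "mistral-7b-instruct-v0.1.Q4_K_M.gguf",
                             "Once upon a time")
    print(shlex.join(cmd))
```

Passing the list to `subprocess.run(cmd)` would launch the binary without shell quoting issues; on Windows, use `main` instead of `./main` as the first argument.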

#### Sample Output

```

diff --git a/docs/mddocs/Quickstart/privateGPT_quickstart.md b/docs/mddocs/Quickstart/privateGPT_quickstart.md

index 2e2f18f4..c5fb068f 100644

--- a/docs/mddocs/Quickstart/privateGPT_quickstart.md

+++ b/docs/mddocs/Quickstart/privateGPT_quickstart.md

@@ -72,7 +72,9 @@ Run below commands to start the service in another terminal:

PGPT_PROFILES=ollama make run

```

- > **Note**: Setting `PGPT_PROFILES=ollama` will load the configuration from `settings.yaml` and `settings-ollama.yaml`.

+ > **Note**:

+ >

+ > Setting `PGPT_PROFILES=ollama` will load the configuration from `settings.yaml` and `settings-ollama.yaml`.

- For **Windows users**:

@@ -82,7 +84,9 @@ Run below commands to start the service in another terminal:

make run

```

- > **Note**: Setting `PGPT_PROFILES=ollama` will load the configuration from `settings.yaml` and `settings-ollama.yaml`.

+ > **Note**:

+ >

+ > Setting `PGPT_PROFILES=ollama` will load the configuration from `settings.yaml` and `settings-ollama.yaml`.
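The note above implies a simple naming rule: the base `settings.yaml` plus one `settings-<profile>.yaml` per profile. The sketch below only illustrates that rule; `settings_files` is a hypothetical helper, not PrivateGPT's actual loader, and the handling of comma-separated profiles is an assumption.

```python
def settings_files(profiles: str) -> list[str]:
    """Return the config files implied by a PGPT_PROFILES-style value."""
    files = ["settings.yaml"]  # the base settings file comes first
    for name in profiles.split(","):  # assumption: profiles may be comma-separated
        name = name.strip()
        if name:
            files.append(f"settings-{name}.yaml")
    return files


if __name__ == "__main__":
    print(settings_files("ollama"))  # ['settings.yaml', 'settings-ollama.yaml']
```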

Upon successful deployment, you will see logs in the terminal similar to the following:

diff --git a/docs/mddocs/Quickstart/ragflow_quickstart.md b/docs/mddocs/Quickstart/ragflow_quickstart.md

index 161831d9..ec3f755b 100644

--- a/docs/mddocs/Quickstart/ragflow_quickstart.md

+++ b/docs/mddocs/Quickstart/ragflow_quickstart.md

@@ -5,8 +5,7 @@

*See the demo of ragflow running Qwen2:7B on Intel Arc A770 below.*

-

-

+[](https://llm-assets.readthedocs.io/en/latest/_images/ragflow-record.mp4)

## Quickstart

@@ -17,64 +16,47 @@

- Disk >= 50 GB

- Docker >= 24.0.0 & Docker Compose >= v2.26.1

-

### 1. Install and Start `Ollama` Service on Intel GPU

Follow the steps in [Run Ollama with IPEX-LLM on Intel GPU Guide](./ollama_quickstart.md) to install and run Ollama on Intel GPU. Ensure that `ollama serve` is running correctly and can be accessed through a local URL (e.g., `http://127.0.0.1:11434`) or a remote URL (e.g., `http://your_ip:11434`).

+> [!IMPORTANT]

+> If `RAGFlow` is not deployed on the same machine as Ollama (that is, `RAGFlow` needs to connect to a remote Ollama service), you must configure the Ollama service to accept connections from any IP address. To do this, set or export the environment variable `OLLAMA_HOST=0.0.0.0` before running `ollama serve`.

-

-```eval_rst

-.. important::

-

- If the `RAGFlow` is not deployed on the same machine where Ollama is running (which means `RAGFlow` needs to connect to a remote Ollama service), you must configure the Ollama service to accept connections from any IP address. To achieve this, set or export the environment variable `OLLAMA_HOST=0.0.0.0` before executing the command `ollama serve`.

-

-.. tip::

-

- If your local LLM is running on Intel Arc™ A-Series Graphics with Linux OS (Kernel 6.2), it is recommended to additionaly set the following environment variable for optimal performance before executing `ollama serve`:

-

- .. code-block:: bash

-

- export SYCL_PI_LEVEL_ZERO_USE_IMMEDIATE_COMMANDLISTS=1

-```

+> [!TIP]

+> If your local LLM is running on Intel Arc™ A-Series Graphics with Linux OS (Kernel 6.2), it is recommended to additionally set the following environment variable for optimal performance before executing `ollama serve`:

+>

+> ```bash

+> export SYCL_PI_LEVEL_ZERO_USE_IMMEDIATE_COMMANDLISTS=1

+> ```
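Before moving on, it can help to confirm that the Ollama endpoint is actually reachable from the machine that will run RAGFlow. The following is a minimal sketch using only the Python standard library; the helper name `ollama_reachable` is hypothetical, and it assumes the server answers a plain HTTP GET on its base URL with status 200.

```python
import urllib.error
import urllib.request


def ollama_reachable(base_url: str, timeout: float = 3.0) -> bool:
    """Return True if an HTTP GET on base_url succeeds with status 200."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, OSError, ValueError):
        # Connection refused, DNS failure, timeout, or a malformed URL.
        return False


if __name__ == "__main__":
    # Replace with your remote URL when Ollama runs on another machine.
    url = "http://127.0.0.1:11434"
    print(url, "reachable" if ollama_reachable(url) else "not reachable")
```

If the local URL is reachable but the remote one is not, check that `OLLAMA_HOST=0.0.0.0` was set before `ollama serve` started and that no firewall blocks the port.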

### 2. Pull Model

Now we need to pull a model for RAG using Ollama. Here we use the [Qwen/Qwen2-7B](https://huggingface.co/Qwen/Qwen2-7B) model as an example. Open a new terminal window and run the following command to pull [`qwen2:latest`](https://ollama.com/library/qwen2).

+- For **Linux users**:

-```eval_rst

-.. tabs::

- .. tab:: Linux

+ ```bash

+ export no_proxy=localhost,127.0.0.1

+ ./ollama pull qwen2:latest

+ ```

- .. code-block:: bash

+- For **Windows users**:

- export no_proxy=localhost,127.0.0.1

- ./ollama pull qwen2:latest

+ Please run the following command in Miniforge or Anaconda Prompt.

- .. tab:: Windows

+ ```cmd

+ set no_proxy=localhost,127.0.0.1

+ ollama pull qwen2:latest

+ ```

- Please run the following command in Miniforge or Anaconda Prompt.

-

- .. code-block:: cmd

-

- set no_proxy=localhost,127.0.0.1

- ollama pull qwen2:latest

-

-.. seealso::

-

- Besides Qwen2, there are other LLM models you might want to explore, such as Llama3, Phi3, Mistral, etc. You can find all available models in the `Ollama model library

+

> [!TIP]

-```eval_rst

-.. note::

-

- If you want to use an Ollama server hosted at a different URL, simply update the **Ollama Base URL** to the new URL and press the **OK** button again to re-confirm the connection to Ollama.

-```

+> [!NOTE]

+> If you want to use an Ollama server hosted at a different URL, simply update the **Ollama Base URL** to the new URL and press the **OK** button again to re-confirm the connection to Ollama.

#### Create Knowledge Base

@@ -248,24 +214,19 @@ Start new conversations by clicking **Chat** in the top navbar.

On the left side, create a conversation by clicking **Create an Assistant**. Under **Assistant Setting**, give it a name and select your knowledge bases.

-

-

-

-  -

+

+

-

+

+  +

Next, go to **Model Setting**, choose your model added by Ollama, and disable the **Max Tokens** toggle. Finally, click **OK** to start.

-```eval_rst

-.. tip::

+> [!TIP]

+> Enabling the **Max Tokens** toggle may result in very short answers.

- Enabling the **Max Tokens** toggle may result in very short answers.

-```

-

-

-

+

Input your questions into the **Message Resume Assistant** textbox at the bottom, and click the button on the right to get responses.
