diff --git a/docs/readthedocs/source/doc/LLM/Quickstart/llama_cpp_quickstart.md b/docs/readthedocs/source/doc/LLM/Quickstart/llama_cpp_quickstart.md

index 4736b6dc..e3e9840b 100644

--- a/docs/readthedocs/source/doc/LLM/Quickstart/llama_cpp_quickstart.md

+++ b/docs/readthedocs/source/doc/LLM/Quickstart/llama_cpp_quickstart.md

@@ -15,7 +15,7 @@ IPEX-LLM's support for `llama.cpp` now is available for Linux system and Windows

#### Linux

For Linux system, we recommend Ubuntu 20.04 or later (Ubuntu 22.04 is preferred).

-Visit the [Install IPEX-LLM on Linux with Intel GPU](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/install_linux_gpu.html), follow [Install Intel GPU Driver](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/install_linux_gpu.html#install-intel-gpu-driver) and [Install oneAPI](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/install_linux_gpu.html#install-oneapi) to install GPU driver and [Intel® oneAPI Base Toolkit 2024.0](https://www.intel.com/content/www/us/en/developer/tools/oneapi/base-toolkit-download.html).

+Visit the [Install IPEX-LLM on Linux with Intel GPU](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/install_linux_gpu.html), follow [Install Intel GPU Driver](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/install_linux_gpu.html#install-intel-gpu-driver) and [Install oneAPI](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/install_linux_gpu.html#install-oneapi) to install GPU driver and Intel® oneAPI Base Toolkit 2024.0.

#### Windows

Visit the [Install IPEX-LLM on Windows with Intel GPU Guide](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/install_windows_gpu.html), and follow [Install Prerequisites](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/install_windows_gpu.html#install-prerequisites) to install [Visual Studio 2022](https://visualstudio.microsoft.com/downloads/) Community Edition, latest [GPU driver](https://www.intel.com/content/www/us/en/download/785597/intel-arc-iris-xe-graphics-windows.html) and Intel® oneAPI Base Toolkit 2024.0.

diff --git a/docs/readthedocs/source/doc/LLM/Quickstart/ollama_quickstart.md b/docs/readthedocs/source/doc/LLM/Quickstart/ollama_quickstart.md

index 998dd2d8..f2bdbca2 100644

--- a/docs/readthedocs/source/doc/LLM/Quickstart/ollama_quickstart.md

+++ b/docs/readthedocs/source/doc/LLM/Quickstart/ollama_quickstart.md

@@ -1,51 +1,81 @@

-# Run Ollama on Linux with Intel GPU

+# Run Ollama with IPEX-LLM on Intel GPU

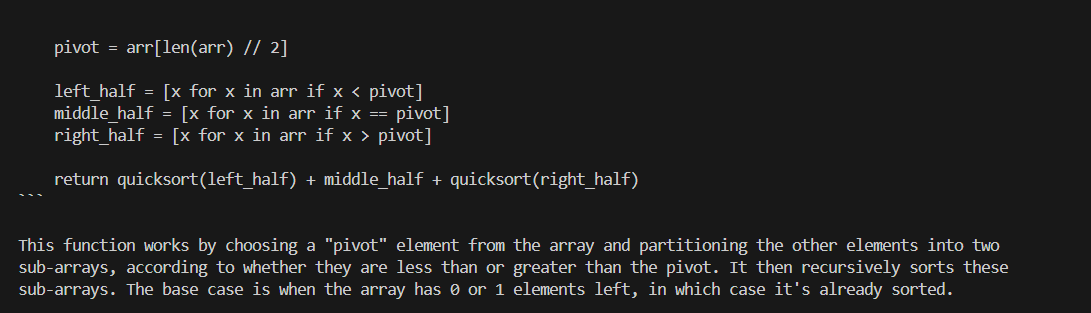

[ollama/ollama](https://github.com/ollama/ollama) is a popular framework designed to build and run language models on a local machine; you can now use the C++ interface of [`ipex-llm`](https://github.com/intel-analytics/ipex-llm) as an accelerated backend for `ollama` running on Intel **GPU** *(e.g., local PC with iGPU, discrete GPU such as Arc, Flex and Max)*.

-```eval_rst

-.. note::

- Only Linux is currently supported.

-```

-

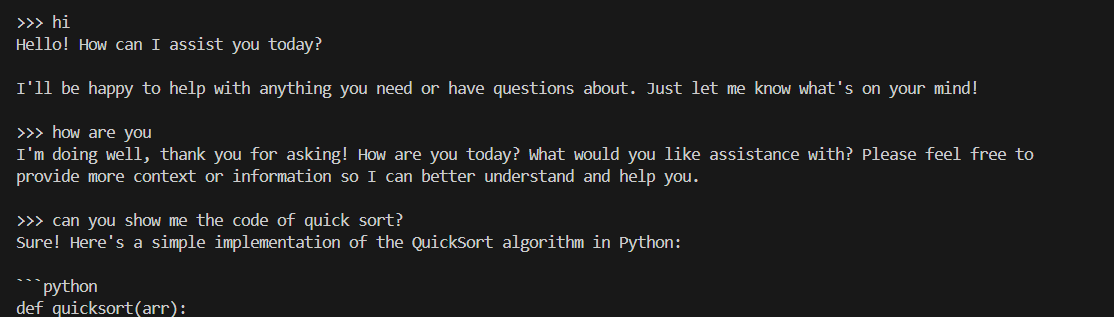

See the demo of running LLaMA2-7B on Intel Arc GPU below.

## Quickstart

-### 1 Install IPEX-LLM with Ollama Binaries

+### 1 Install IPEX-LLM for Ollama

-Visit [Run llama.cpp with IPEX-LLM on Intel GPU Guide](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/llama_cpp_quickstart.html), and follow the instructions in section [Install Prerequisits on Linux](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/llama_cpp_quickstart.html#linux) , and section [Install IPEX-LLM cpp](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/llama_cpp_quickstart.html#install-ipex-llm-for-llama-cpp) to install the IPEX-LLM with Ollama binaries.

+IPEX-LLM's support for `ollama` is now available on both Linux and Windows.

-**After the installation, you should have created a conda environment, named `llm-cpp` for instance, for running `llama.cpp` commands with IPEX-LLM.**

+Visit the [Run llama.cpp with IPEX-LLM on Intel GPU Guide](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/llama_cpp_quickstart.html), follow the instructions in the [Prerequisites](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/llama_cpp_quickstart.html#prerequisites) section to set up your system, and then follow the [Install IPEX-LLM cpp](https://ipex-llm.readthedocs.io/en/latest/doc/LLM/Quickstart/llama_cpp_quickstart.html#install-ipex-llm-for-llama-cpp) section to install IPEX-LLM with the Ollama binaries.

+

+**After the installation, you should have created a conda environment, named `llm-cpp` for instance, for running `ollama` commands with IPEX-LLM.**

### 2 Initialize Ollama

Activate the `llm-cpp` conda environment and initialize Ollama by executing the commands below. A symbolic link to `ollama` will appear in your current directory.

-```bash

-conda activate llm-cpp

-init-ollama

-```

+```eval_rst

+.. tabs::
+   .. tab:: Linux
+
+      .. code-block:: bash
+
+         conda activate llm-cpp
+         init-ollama
+
+   .. tab:: Windows
+
+      Please run the following commands with **administrator privileges in Anaconda Prompt**.
+
+      .. code-block:: bash
+
+         conda activate llm-cpp
+         init-ollama.bat
+

+```

+

+**You can now use this executable in the same way as a standard `ollama` binary.**

### 3 Run Ollama Serve

Launch the Ollama service:

-```bash

-conda activate llm-cpp

+```eval_rst

+.. tabs::
+   .. tab:: Linux

-export no_proxy=localhost,127.0.0.1
-export ZES_ENABLE_SYSMAN=1
-source /opt/intel/oneapi/setvars.sh
+      .. code-block:: bash
+
+         export no_proxy=localhost,127.0.0.1
+         export ZES_ENABLE_SYSMAN=1
+         source /opt/intel/oneapi/setvars.sh
+
+         ./ollama serve
+
+   .. tab:: Windows
+
+      Please run the following commands in Anaconda Prompt.
+
+      .. code-block:: bash
+
+         set no_proxy=localhost,127.0.0.1
+         set ZES_ENABLE_SYSMAN=1
+         call "C:\Program Files (x86)\Intel\oneAPI\setvars.bat"
+
+         ollama.exe serve
-./ollama serve

```

```eval_rst

.. note::

-

+

To allow the service to accept connections from all IP addresses, use `OLLAMA_HOST=0.0.0.0 ./ollama serve` instead of just `./ollama serve`.

```
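Before moving on, it can help to confirm that the service is reachable. The following is a minimal Python sketch, not part of the Ollama or IPEX-LLM tooling: the helper names `service_url` and `is_service_up` are hypothetical, and it assumes the service listens on the default port `11434` (the one used in the `curl` example of this guide) and answers a plain HTTP GET on its root URL.

```python
import urllib.error
import urllib.request


def service_url(host: str = "localhost", port: int = 11434) -> str:
    """Build the root URL of the Ollama service (default port assumed)."""
    return f"http://{host}:{port}/"


def is_service_up(url: str, timeout: float = 2.0) -> bool:
    """Return True if an HTTP GET on the URL succeeds with status 200."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or DNS failure: treat as "not up".
        return False
```

With `ollama serve` running in another terminal, `is_service_up(service_url())` should return `True`; if you started the service with `OLLAMA_HOST=0.0.0.0`, pass the machine's address to `service_url` instead of `localhost`.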

@@ -56,55 +86,101 @@ The console will display messages similar to the following:

-

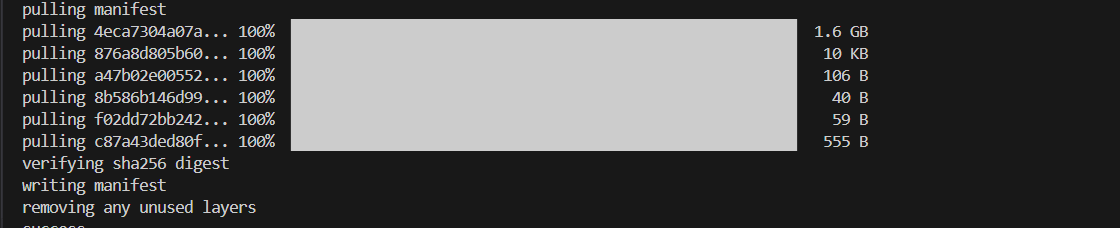

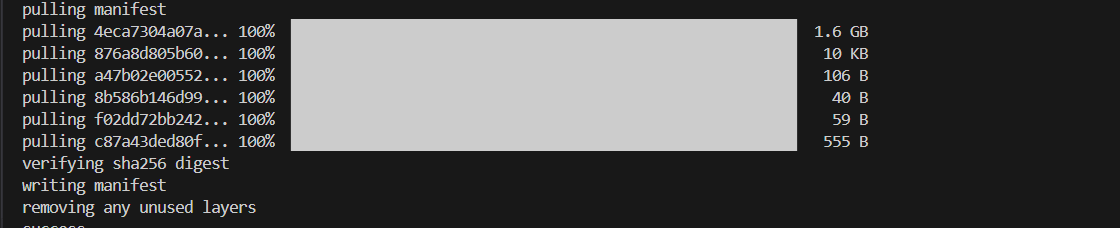

### 4 Pull Model

-Keep the Ollama service on and open another terminal and run `./ollama pull ` to automatically pull a model. e.g. `dolphin-phi:latest`:

+Keep the Ollama service running, open another terminal, and run `./ollama pull` on Linux (`ollama.exe pull` on Windows) followed by a model name, e.g. `dolphin-phi:latest`, to pull that model automatically:

-

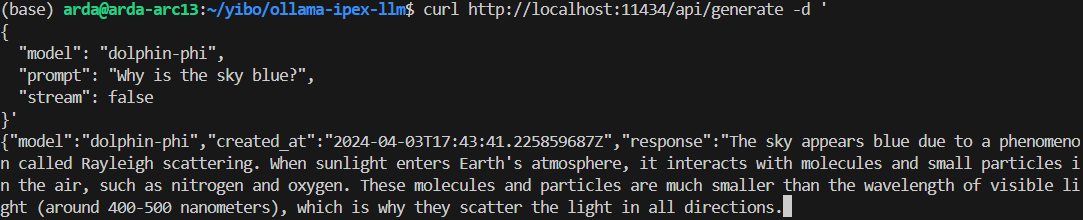

### 5 Using Ollama

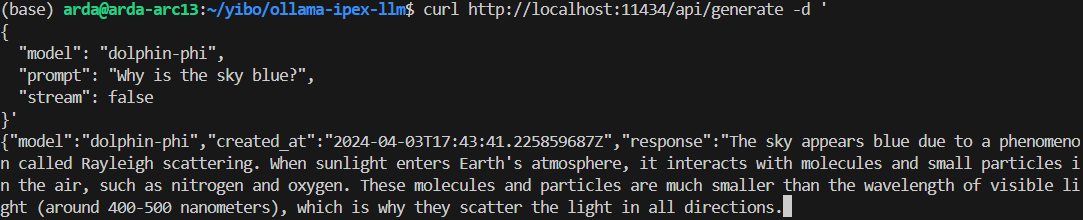

#### Using Curl

-Using `curl` is the easiest way to verify the API service and model. Execute the following commands in a terminal. **Replace the with your pulled model**, e.g. `dolphin-phi`.

+Using `curl` is the easiest way to verify the API service and model. Execute the following command in a terminal, **setting the empty `"model"` value to the name of your pulled model**, e.g. `dolphin-phi`.

+

+```eval_rst

+.. tabs::
+   .. tab:: Linux
+
+      .. code-block:: bash
+
+         curl http://localhost:11434/api/generate -d '
+         {
+            "model": "",
+            "prompt": "Why is the sky blue?",
+            "stream": false,
+            "options":{"num_gpu": 999}
+         }'
+
+   .. tab:: Windows
+
+      Please run the following command in Anaconda Prompt.
+
+      .. code-block:: bash
+
+         curl http://localhost:11434/api/generate -d "
+         {
+            \"model\": \"\",
+            \"prompt\": \"Why is the sky blue?\",
+            \"stream\": false,
+            \"options\":{\"num_gpu\": 999}
+         }"

-```shell

-curl http://localhost:11434/api/generate -d '

-{

- "model": "",

- "prompt": "Why is the sky blue?",

- "stream": false

-}'

```

-An example output of using model `doplphin-phi` looks like the following:

-

-

-


+```eval_rst

+.. note::

+   Please don't forget to set ``"options":{"num_gpu": 999}`` so that all layers of your model run on the Intel GPU; otherwise, some layers may run on the CPU.

+```
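The same request can also be sent from a script. The following is a minimal Python sketch using only the standard library; it assumes the service from step 3 is listening on `localhost:11434`, and the helper names `build_payload` and `generate` are hypothetical, not part of Ollama or IPEX-LLM. It mirrors the JSON body of the `curl` example, including `"options": {"num_gpu": 999}`.

```python
import json
import urllib.request

# Assumption: the Ollama service listens on the default local address.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(model: str, prompt: str) -> dict:
    """Build the same JSON body as the curl example."""
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,
        # Request that all model layers be placed on the Intel GPU.
        "options": {"num_gpu": 999},
    }


def generate(model: str, prompt: str, url: str = OLLAMA_URL) -> str:
    """POST the payload and return the generated text."""
    data = json.dumps(build_payload(model, prompt)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        body = json.loads(response.read().decode("utf-8"))
    # With "stream": false the reply is a single JSON object whose
    # "response" field holds the generated text.
    return body["response"]
```

With the service running and a model pulled, calling `generate("dolphin-phi", "Why is the sky blue?")` should return the model's answer as a string. Keeping the payload in its own function makes it easy to check that `num_gpu` is always included.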

-#### Using Ollama Run

-

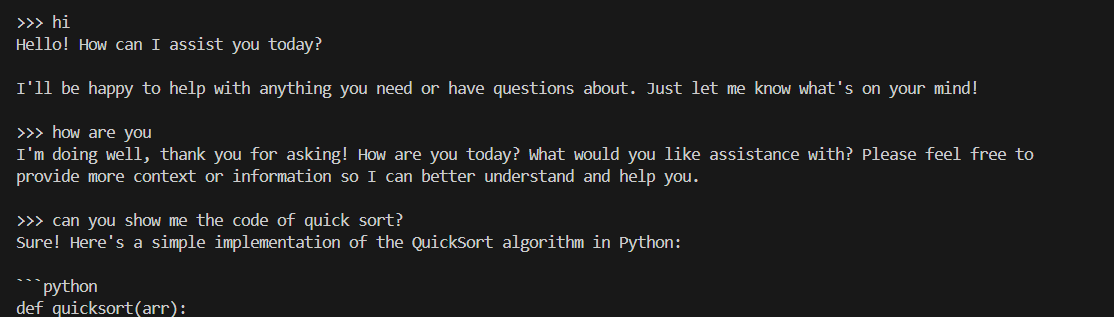

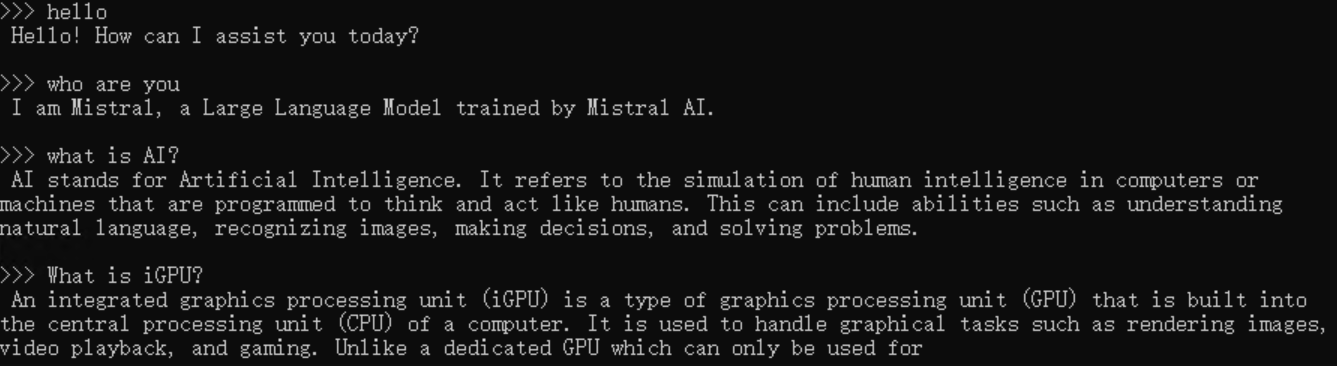

-You can also use `ollama run` to run the model directly on console. **Replace the with your pulled model**, e.g. `dolphin-phi`. This command will seamlessly download, load the model, and enable you to interact with it through a streaming conversation."

+#### Using Ollama Run GGUF models

+Ollama supports importing GGUF models through a Modelfile. For example, if you have downloaded `mistral-7b-instruct-v0.1.Q4_K_M.gguf` from [Mistral-7B-Instruct-v0.1-GGUF](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.1-GGUF/tree/main), you can create a file named `Modelfile`:

```bash

-conda activate llm-cpp

-

-export no_proxy=localhost,127.0.0.1

-export ZES_ENABLE_SYSMAN=1

-source /opt/intel/oneapi/setvars.sh

-

-./ollama run

+FROM ./mistral-7b-instruct-v0.1.Q4_K_M.gguf

+TEMPLATE [INST] {{ .Prompt }} [/INST]

+PARAMETER num_gpu 999

+PARAMETER num_predict 64

```

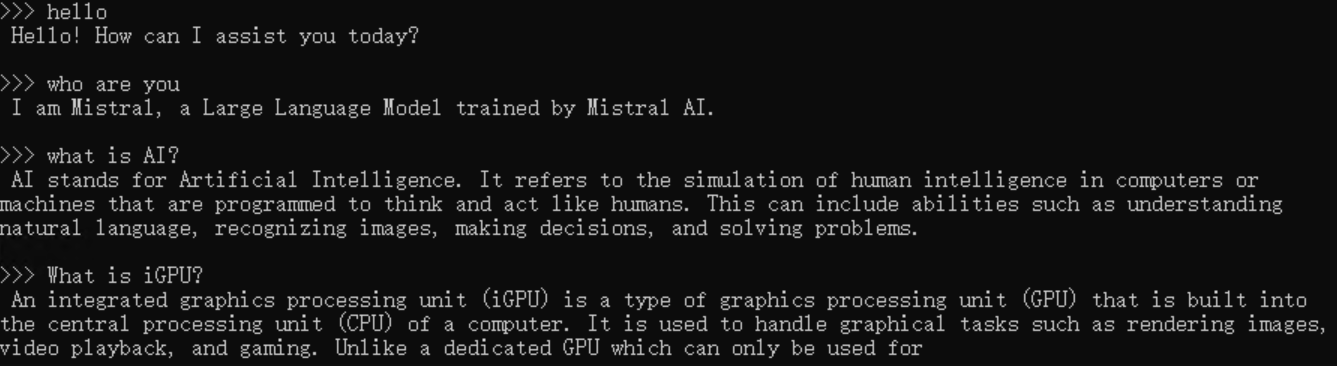


-An example process of interacting with model with `ollama run` looks like the following:

+```eval_rst

+.. note::

-

-

+   Please don't forget to set ``PARAMETER num_gpu 999`` so that all layers of your model run on the Intel GPU; otherwise, some layers may run on the CPU.

+```
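If you would rather generate the Modelfile from a script than write it by hand, the following Python sketch renders the same four directives shown in the example. The helper names `render_modelfile` and `write_modelfile` are hypothetical, and the defaults simply copy the values from the example; adjust them for your own model.

```python
from pathlib import Path


def render_modelfile(gguf_path: str, num_gpu: int = 999, num_predict: int = 64) -> str:
    """Return Modelfile text mirroring the example in this guide."""
    lines = [
        f"FROM {gguf_path}",
        # Plain string (not an f-string), so the braces are kept literally.
        "TEMPLATE [INST] {{ .Prompt }} [/INST]",
        # num_gpu 999 asks Ollama to place all layers on the Intel GPU.
        f"PARAMETER num_gpu {num_gpu}",
        f"PARAMETER num_predict {num_predict}",
    ]
    return "\n".join(lines) + "\n"


def write_modelfile(directory: str, gguf_path: str) -> Path:
    """Write the rendered text to <directory>/Modelfile and return its path."""
    target = Path(directory) / "Modelfile"
    target.write_text(render_modelfile(gguf_path), encoding="utf-8")
    return target
```

For instance, `write_modelfile(".", "./mistral-7b-instruct-v0.1.Q4_K_M.gguf")` writes a `Modelfile` into the current directory with the same content as the example.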

+

+You can then create the model in Ollama with `ollama create example -f Modelfile` and use `ollama run` to run it directly in the console.

+

+```eval_rst

+.. tabs::
+   .. tab:: Linux
+
+      .. code-block:: bash
+
+         export no_proxy=localhost,127.0.0.1
+         ./ollama create example -f Modelfile
+         ./ollama run example
+
+   .. tab:: Windows
+
+      Please run the following commands in Anaconda Prompt.
+
+      .. code-block:: bash
+
+         set no_proxy=localhost,127.0.0.1
+         ollama.exe create example -f Modelfile
+         ollama.exe run example
+

+```

+

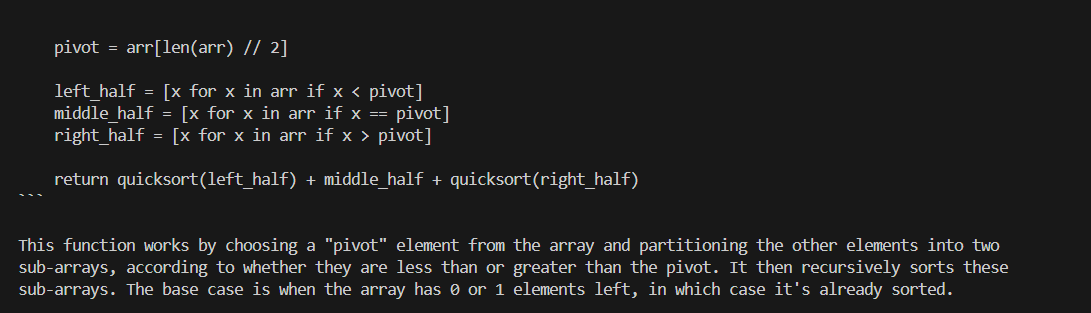

+An example of interacting with the model through `ollama run example` looks like the following:

+

+

+

