diff --git a/docs/readthedocs/source/_templates/sidebar_quicklinks.html b/docs/readthedocs/source/_templates/sidebar_quicklinks.html

index 8bf983bc..d1714000 100644

--- a/docs/readthedocs/source/_templates/sidebar_quicklinks.html

+++ b/docs/readthedocs/source/_templates/sidebar_quicklinks.html

@@ -29,11 +29,14 @@

Install IPEX-LLM in Docker on Windows with Intel GPU

- Run Code Copilot (Continue) in VSCode with Intel GPU

+ Run Langchain-Chatchat (RAG Application) on Intel GPU

Run Text Generation WebUI on Intel GPU

+

+ Run Code Copilot (Continue) in VSCode with Intel GPU

+

Run Performance Benchmarking with IPEX-LLM

diff --git a/docs/readthedocs/source/_toc.yml b/docs/readthedocs/source/_toc.yml

index 6ef02e87..97f63a09 100644

--- a/docs/readthedocs/source/_toc.yml

+++ b/docs/readthedocs/source/_toc.yml

@@ -23,6 +23,7 @@ subtrees:

- file: doc/LLM/Quickstart/install_linux_gpu

- file: doc/LLM/Quickstart/install_windows_gpu

- file: doc/LLM/Quickstart/docker_windows_gpu

+ - file: doc/LLM/Quickstart/chatchat_quickstart

- file: doc/LLM/Quickstart/webui_quickstart

- file: doc/LLM/Quickstart/continue_quickstart

- file: doc/LLM/Quickstart/benchmark_quickstart

diff --git a/docs/readthedocs/source/doc/LLM/Quickstart/chatchat_quickstart.md b/docs/readthedocs/source/doc/LLM/Quickstart/chatchat_quickstart.md

new file mode 100644

index 00000000..94edc7eb

--- /dev/null

+++ b/docs/readthedocs/source/doc/LLM/Quickstart/chatchat_quickstart.md

@@ -0,0 +1,74 @@

+# Run Langchain-Chatchat on Intel GPU

+

+[chatchat-space/Langchain-Chatchat](https://github.com/chatchat-space/Langchain-Chatchat) is a Knowledge Base QA application using RAG pipeline; by porting it to [`ipex-llm`](https://github.com/intel-analytics/ipex-llm), users can now easily use [Langchain-Chatchat](https://github.com/intel-analytics/Langchain-Chatchat) with LLMs and Embedding models running locally on Intel GPU (e.g., local PC with iGPU, discrete GPU such as Arc, Flex and Max); see the demos of running LLaMA2-7B (English) and ChatGLM-3-6B (Chinese) on an Intel Core Ultra laptop below.

+

+

+

+ | English |

+ 简体中文 |

+

+

+ |

+ |

+

+

+

+

+

+>You can change the UI language in the left-side menu. We currently support **English** and **简体中文** (see video demos below).

+

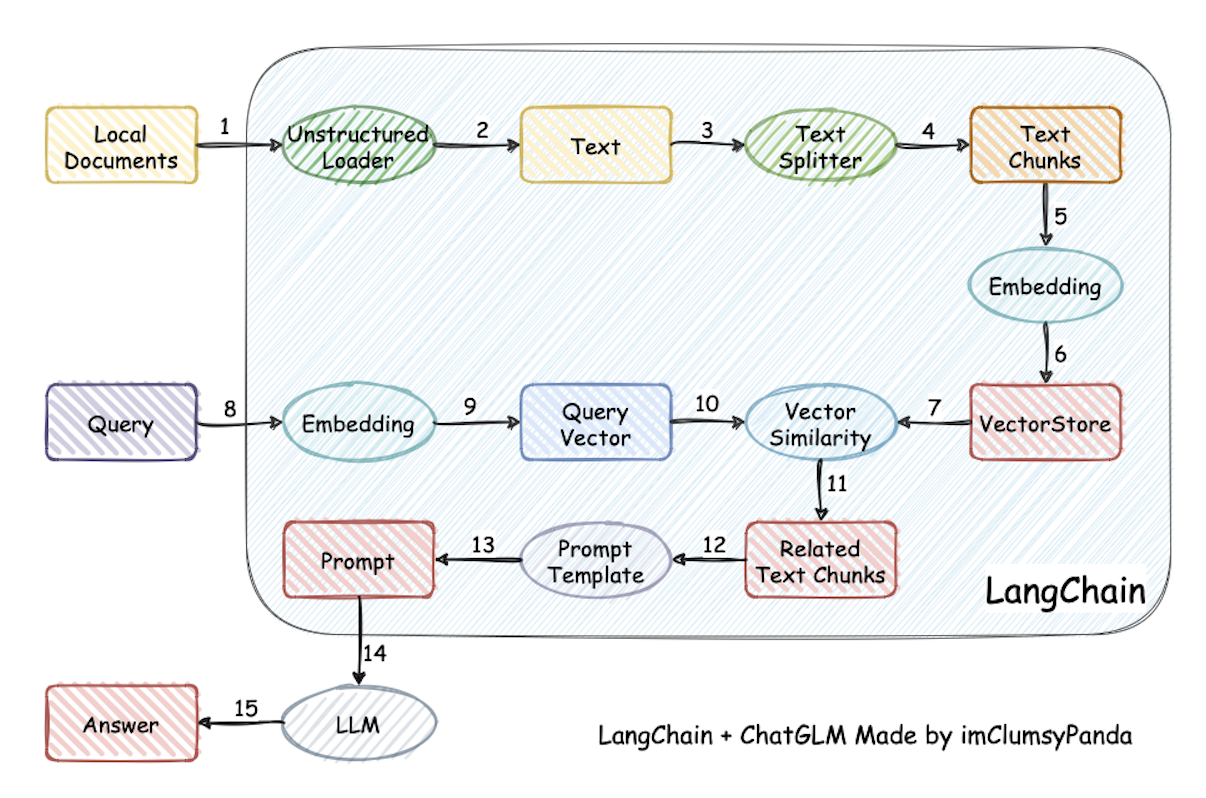

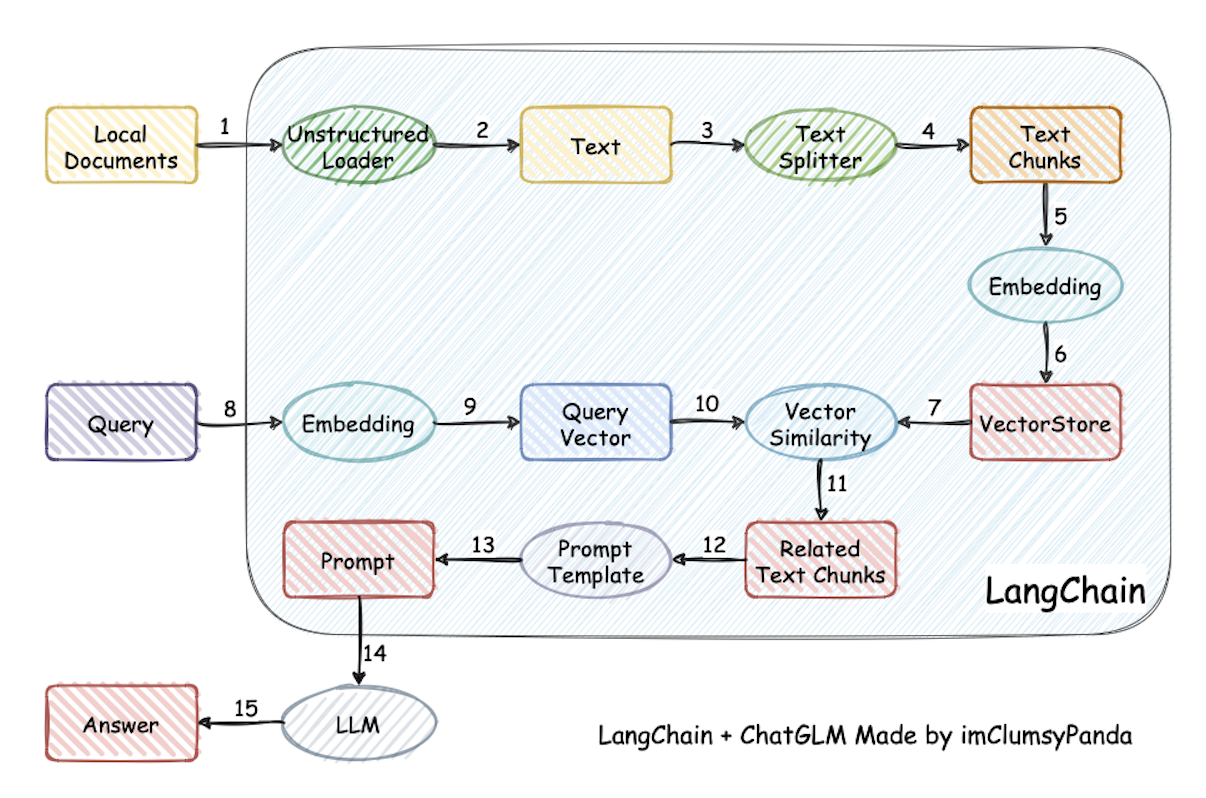

+## Langchain-Chatchat Architecture

+

+See the Langchain-Chatchat architecture below ([source](https://github.com/chatchat-space/Langchain-Chatchat/blob/master/img/langchain%2Bchatglm.png)).

+

+ +

+## Quickstart

+

+

+

+### Install and Run

+

+ Follow the guide that corresponds to your specific system and GPU type from the links provided below:

+

+- For systems with Intel Core Ultra integrated GPU: [Windows Guide](./INSTALL_win_mtl.md)

+- For systems with Intel Arc A-Series GPU: [Windows Guide](./INSTALL_windows_arc.md) | [Linux Guide](./INSTALL_linux_arc.md)

+- For systems with Intel Data Center Max Series GPU: [Linux Guide](./INSTALL_linux_max.md)

+

+

+### How to use RAG

+

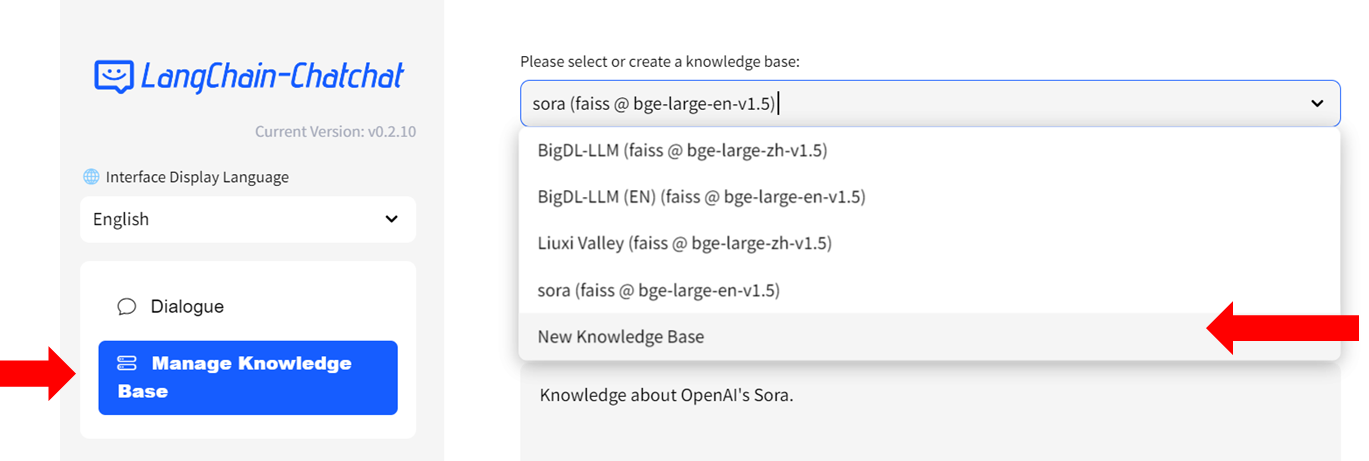

+#### Step 1: Create Knowledge Base

+

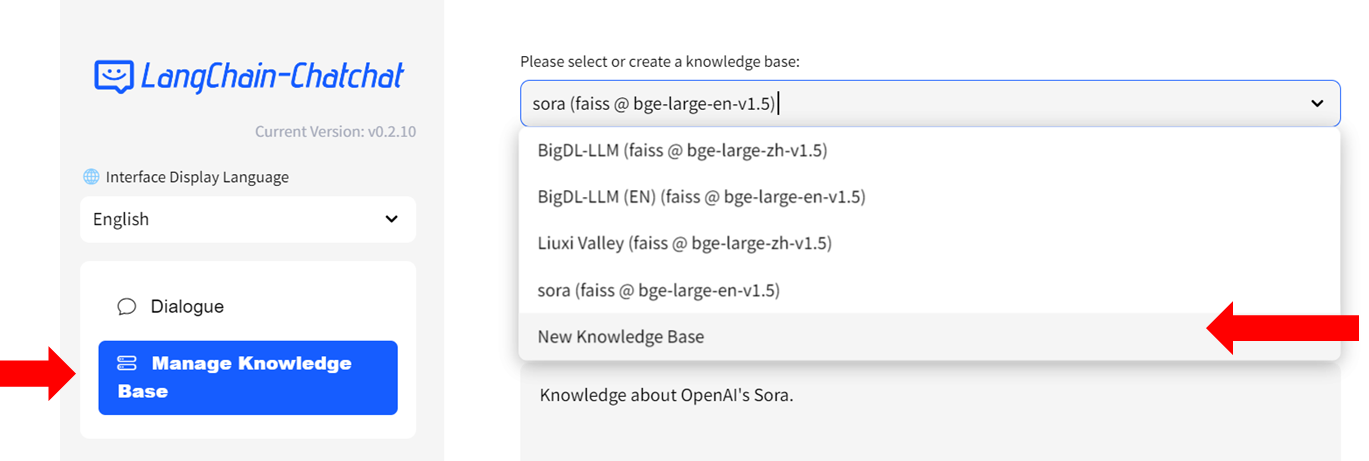

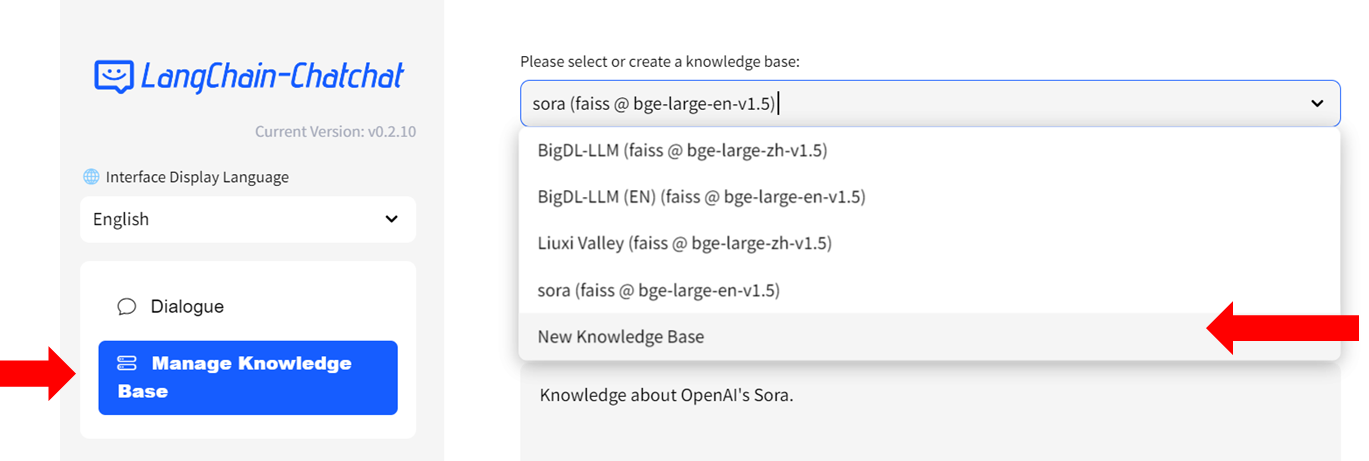

+- Select `Manage Knowledge Base` from the menu on the left, then choose `New Knowledge Base` from the dropdown menu on the right side.

+

+

+## Quickstart

+

+

+

+### Install and Run

+

+ Follow the guide that corresponds to your specific system and GPU type from the links provided below:

+

+- For systems with Intel Core Ultra integrated GPU: [Windows Guide](./INSTALL_win_mtl.md)

+- For systems with Intel Arc A-Series GPU: [Windows Guide](./INSTALL_windows_arc.md) | [Linux Guide](./INSTALL_linux_arc.md)

+- For systems with Intel Data Center Max Series GPU: [Linux Guide](./INSTALL_linux_max.md)

+

+

+### How to use RAG

+

+#### Step 1: Create Knowledge Base

+

+- Select `Manage Knowledge Base` from the menu on the left, then choose `New Knowledge Base` from the dropdown menu on the right side.

+

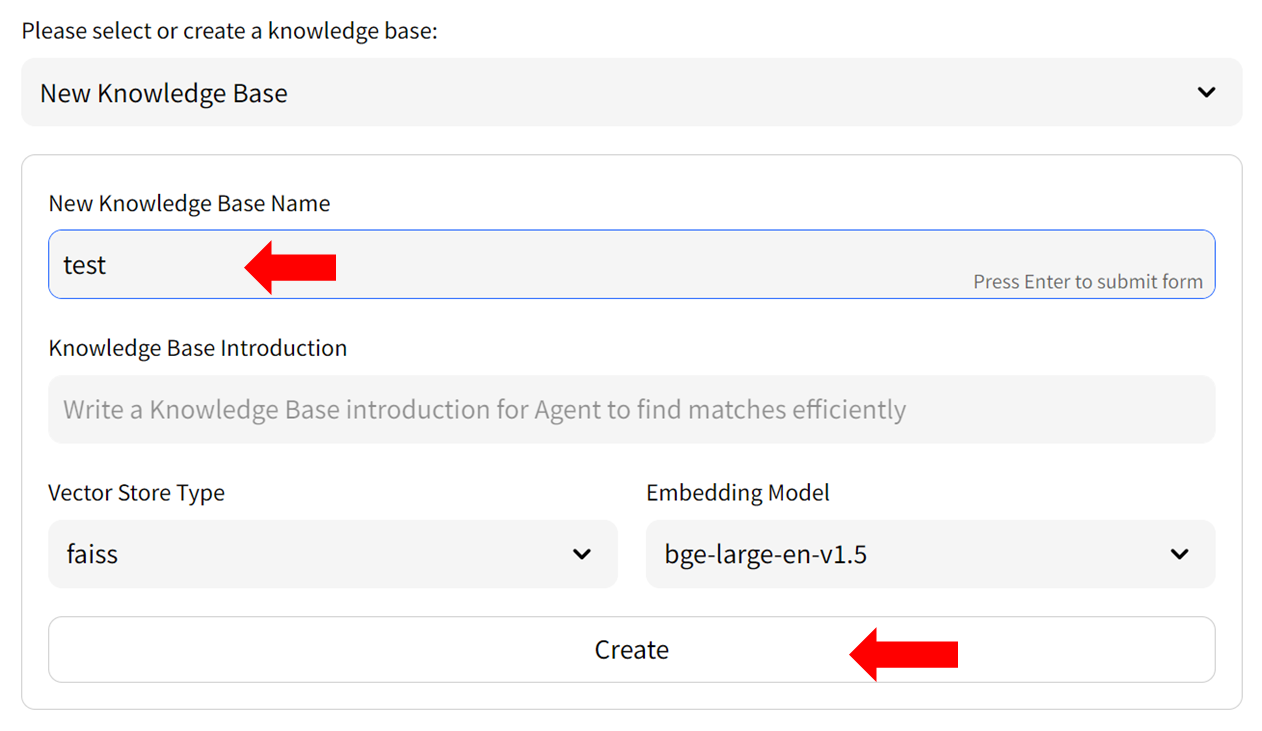

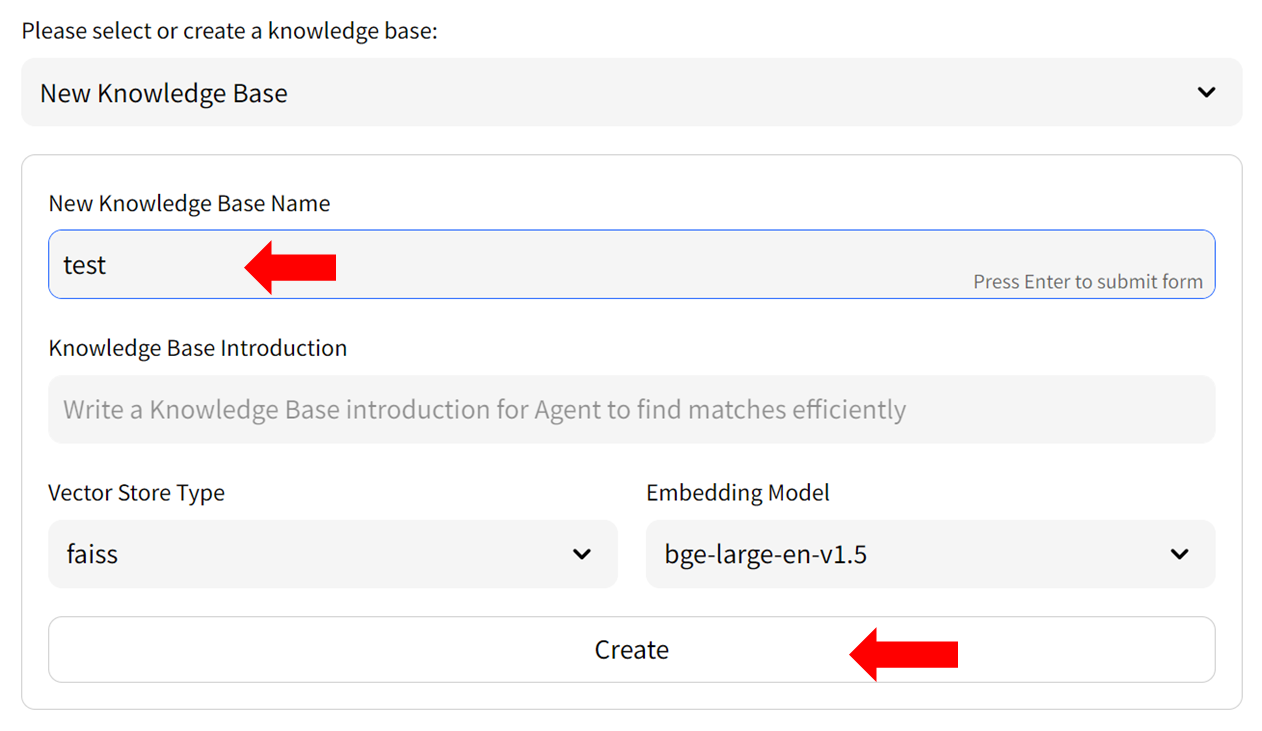

+- Fill in the name of your new knowledge base (example: "test") and press the `Create` button. Adjust any other settings as needed.

+

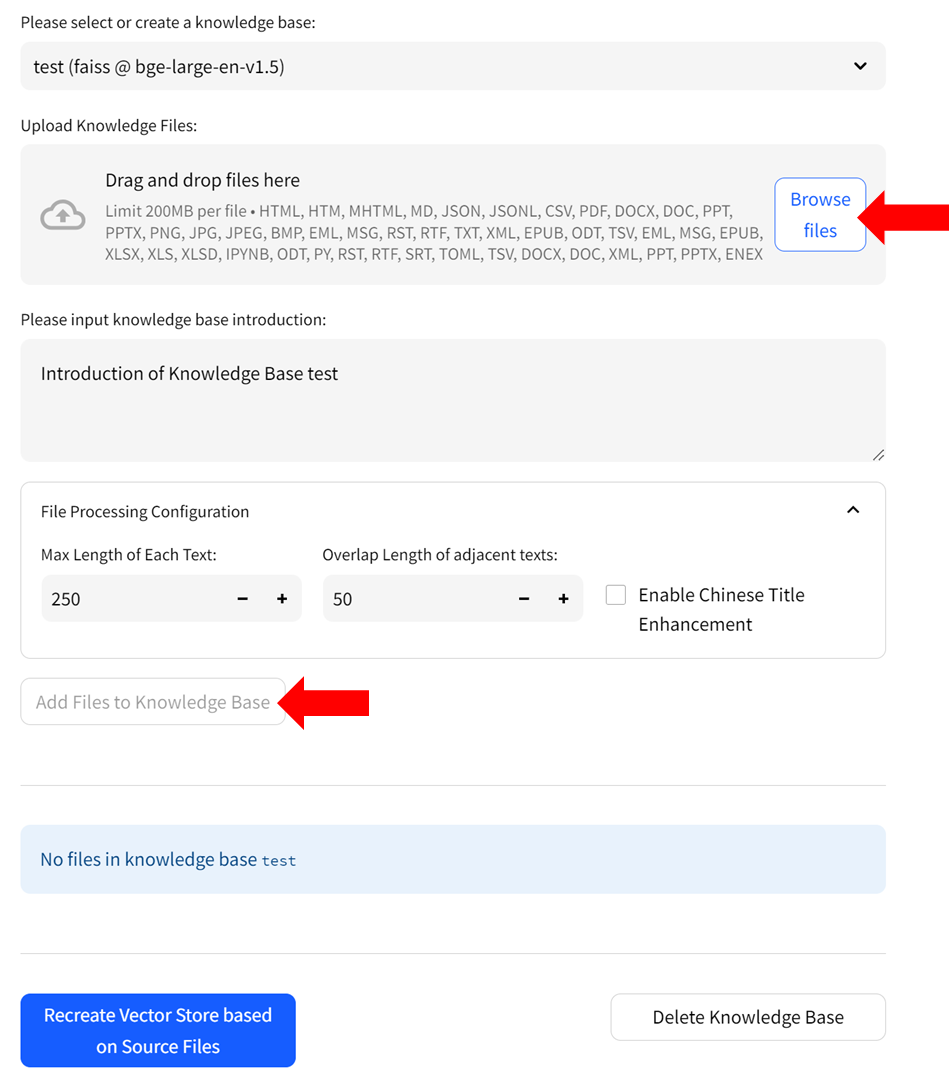

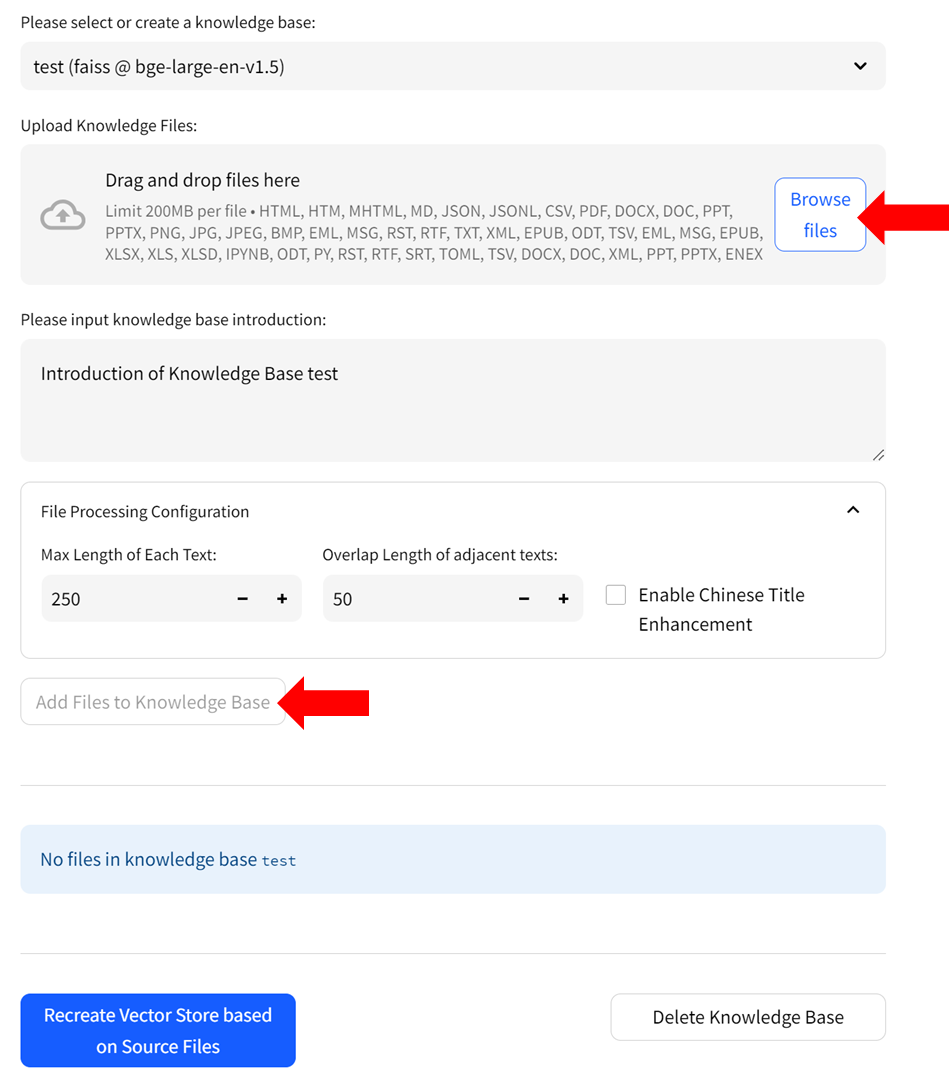

+- Upload knowledge files from your computer and allow some time for the upload to complete. Once finished, click on `Add files to Knowledge Base` button to build the vector store. Note: this process may take several minutes.

+

+

+

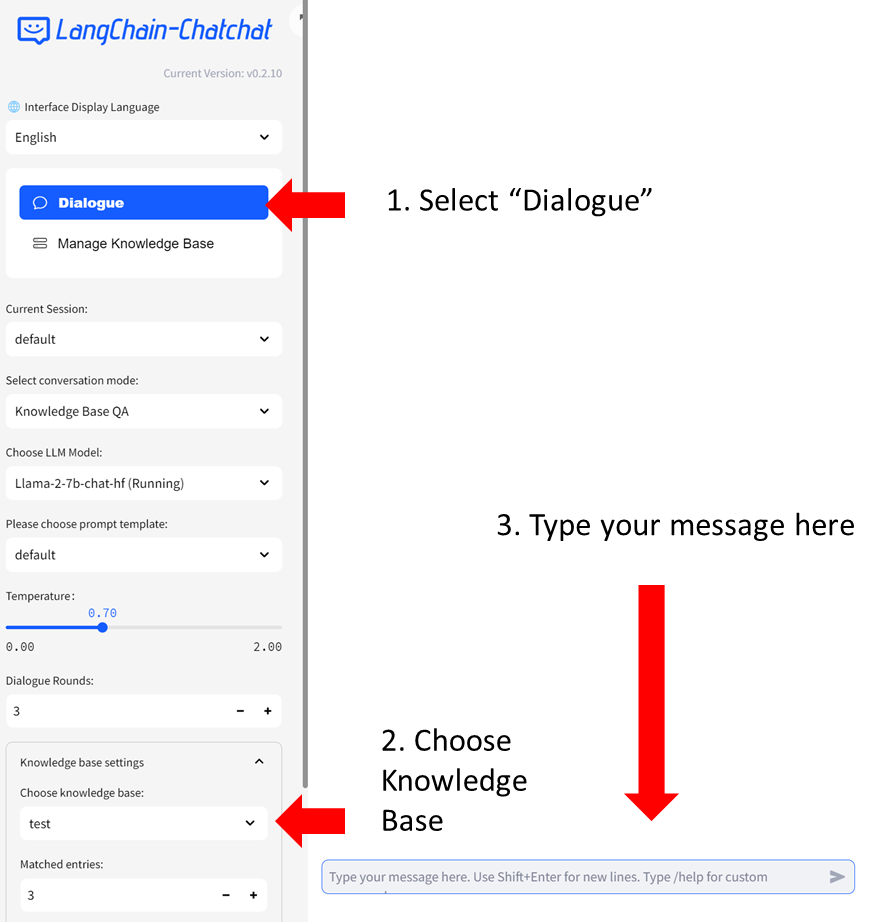

+#### Step 2: Chat with RAG

+

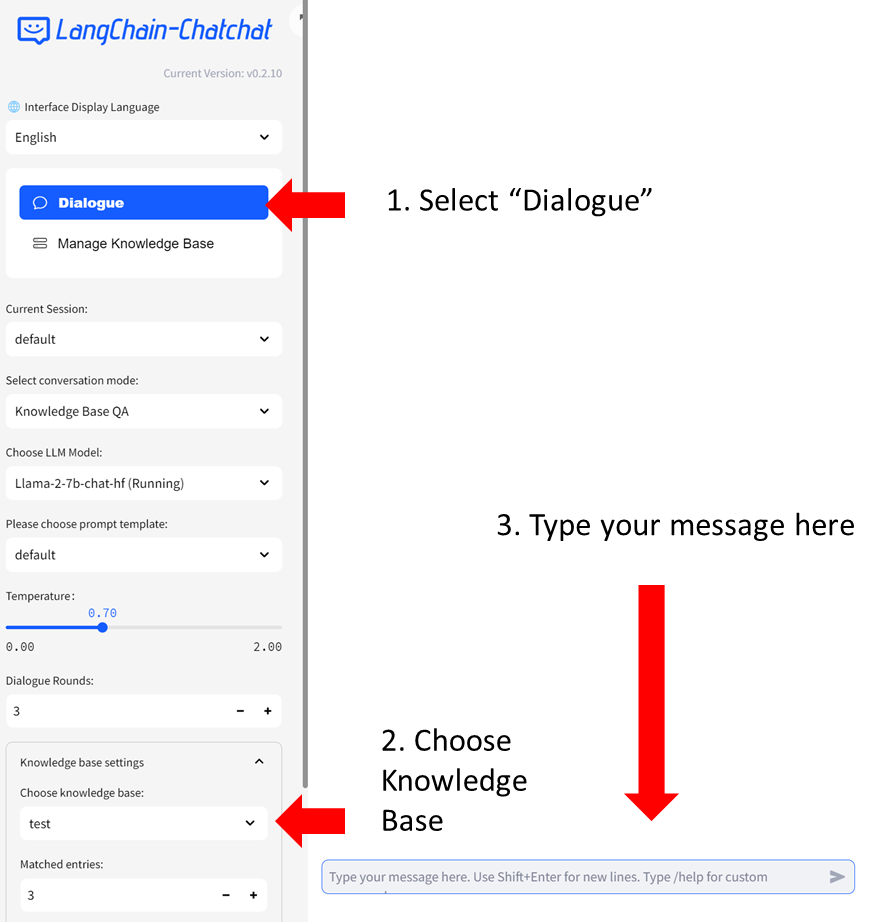

+You can now click `Dialogue` on the left-side menu to return to the chat UI. Then in `Knowledge base settings` menu, choose the Knowledge Base you just created, e.g, "test". Now you can start chatting.

+

+

+

+

+

+For more information about how to use Langchain-Chatchat, refer to Official Quickstart guide in [English](./README_en.md), [Chinese](./README_chs.md), or the [Wiki](https://github.com/chatchat-space/Langchain-Chatchat/wiki/).

+

+

+

+### Trouble Shooting & Tips

+

+#### 1. Version Compatibility

+

+Ensure that you have installed `ipex-llm>=2.1.0b20240327`. To upgrade `ipex-llm`, use

+```bash

+pip install --pre --upgrade ipex-llm[xpu] -f https://developer.intel.com/ipex-whl-stable-xpu

+```

+

+#### 2. Prompt Templates

+

+In the left-side menu, you have the option to choose a prompt template. There're several pre-defined templates - those ending with '_cn' are Chinese templates, and those ending with '_en' are English templates. You can also define your own prompt templates in `configs/prompt_config.py`. Remember to restart the service to enable these changes.

diff --git a/docs/readthedocs/source/doc/LLM/Quickstart/index.rst b/docs/readthedocs/source/doc/LLM/Quickstart/index.rst

index 7eade6a1..ae280e3e 100644

--- a/docs/readthedocs/source/doc/LLM/Quickstart/index.rst

+++ b/docs/readthedocs/source/doc/LLM/Quickstart/index.rst

@@ -13,8 +13,9 @@ This section includes efficient guide to show you how to:

* `Install IPEX-LLM on Windows with Intel GPU <./install_windows_gpu.html>`_

* `Install IPEX-LLM in Docker on Windows with Intel GPU <./docker_windows_gpu.html>`_

* `Run Performance Benchmarking with IPEX-LLM <./benchmark_quickstart.html>`_

-* `Run Code Copilot (Continue) in VSCode with Intel GPU <./continue_quickstart.html>`_

+* `Run Langchain-Chatchat (RAG Application) on Intel GPU <./chatchat_quickstart.html>`_

* `Run Text Generation WebUI on Intel GPU <./webui_quickstart.html>`_

+* `Run Code Copilot (Continue) in VSCode with Intel GPU <./continue_quickstart.html>`_

* `Run llama.cpp with IPEX-LLM on Intel GPU <./llama_cpp_quickstart.html>`_

.. |bigdl_llm_migration_guide| replace:: ``bigdl-llm`` Migration Guide

+

+## Quickstart

+

+

+

+### Install and Run

+

+ Follow the guide that corresponds to your specific system and GPU type from the links provided below:

+

+- For systems with Intel Core Ultra integrated GPU: [Windows Guide](./INSTALL_win_mtl.md)

+- For systems with Intel Arc A-Series GPU: [Windows Guide](./INSTALL_windows_arc.md) | [Linux Guide](./INSTALL_linux_arc.md)

+- For systems with Intel Data Center Max Series GPU: [Linux Guide](./INSTALL_linux_max.md)

+

+

+### How to use RAG

+

+#### Step 1: Create Knowledge Base

+

+- Select `Manage Knowledge Base` from the menu on the left, then choose `New Knowledge Base` from the dropdown menu on the right side.

+

+

+## Quickstart

+

+

+

+### Install and Run

+

+ Follow the guide that corresponds to your specific system and GPU type from the links provided below:

+

+- For systems with Intel Core Ultra integrated GPU: [Windows Guide](./INSTALL_win_mtl.md)

+- For systems with Intel Arc A-Series GPU: [Windows Guide](./INSTALL_windows_arc.md) | [Linux Guide](./INSTALL_linux_arc.md)

+- For systems with Intel Data Center Max Series GPU: [Linux Guide](./INSTALL_linux_max.md)

+

+

+### How to use RAG

+

+#### Step 1: Create Knowledge Base

+

+- Select `Manage Knowledge Base` from the menu on the left, then choose `New Knowledge Base` from the dropdown menu on the right side.

+